In our pilot study, we draped a thin, flexible electrode array over the surface of the volunteer’s brain. The electrodes recorded neural signals and sent them to a speech decoder, which translated the signals into the words the man intended to say. It was the first time a paralyzed person who couldn’t speak had used neurotechnology to broadcast whole words, not just letters, from the brain.

That trial was the culmination of more than a decade of research on the underlying brain mechanisms that govern speech, and we’re enormously proud of what we’ve accomplished so far. But we’re just getting started.

My lab at UCSF is working with colleagues around the world to make this technology safe, stable, and reliable enough for everyday use at home. We’re also working to improve the system’s performance so it will be worth the effort.

How neuroprosthetics work

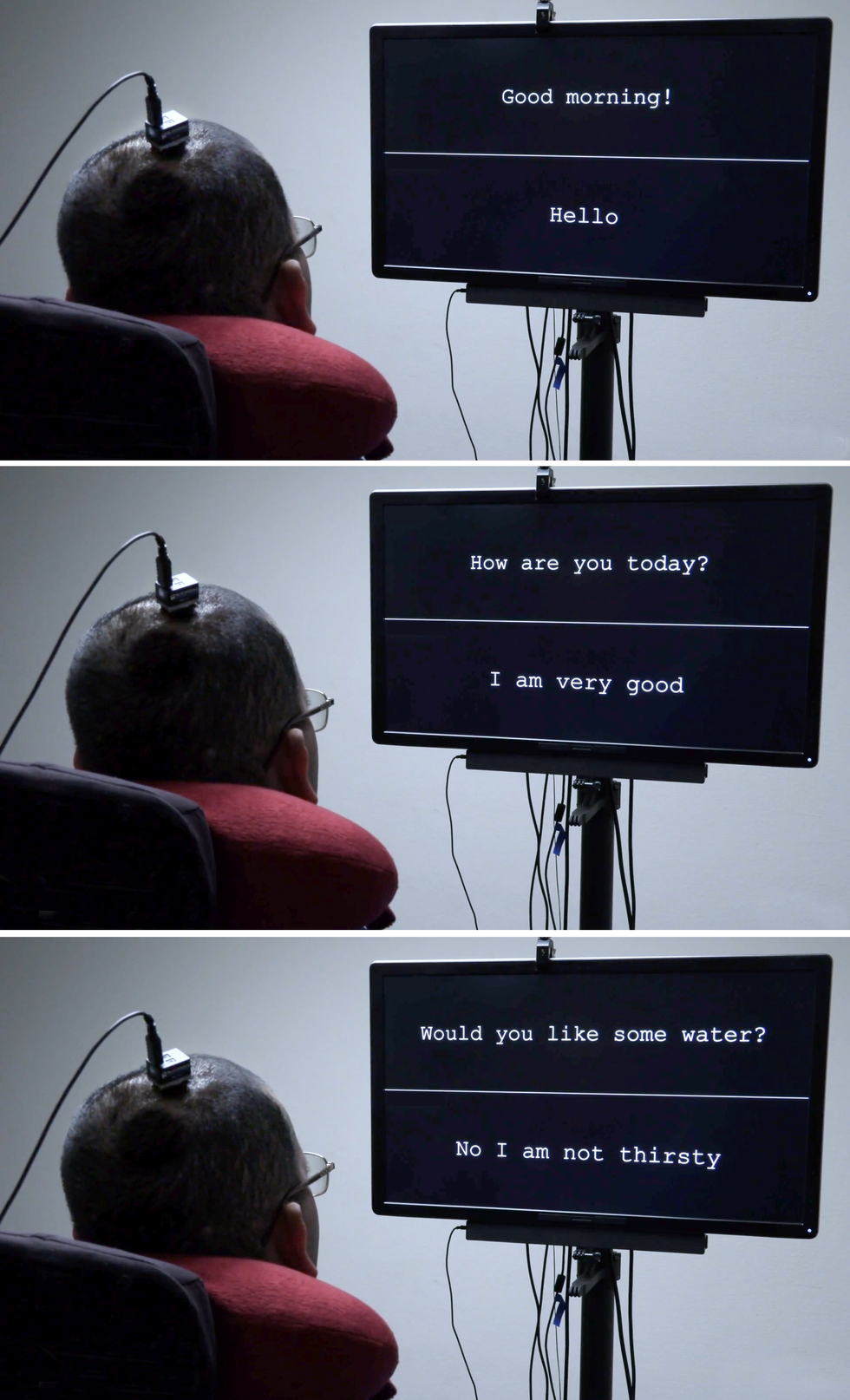

The first version of the brain-computer interface gave the volunteer a vocabulary of 50 practical words. University of California, San Francisco

Neuroprosthetics have come a long way in the past two decades. Prosthetic implants for hearing have advanced the furthest, with designs that interface with the cochlear nerve of the inner ear or directly into the auditory brain stem. There’s also considerable research on retinal and brain implants for vision, as well as efforts to give people with prosthetic hands a sense of touch. All of these sensory prosthetics take information from the outside world and convert it into electrical signals that feed into the brain’s processing centers.

The opposite kind of neuroprosthetic records the electrical activity of the brain and converts it into signals that control something in the outside world, such as a robotic arm, a video-game controller, or a cursor on a computer screen. That last control modality has been used by groups such as the BrainGate consortium to enable paralyzed people to type words, sometimes one letter at a time, sometimes using an autocomplete function to speed up the process.

For that typing-by-brain function, an implant is typically placed in the motor cortex, the part of the brain that controls movement. Then the user imagines certain physical actions to control a cursor that moves over a virtual keyboard. Another approach, pioneered by some of my collaborators in a 2021 paper, had one user imagine that he was holding a pen to paper and was writing letters, creating signals in the motor cortex that were translated into text. That approach set a new record for speed, enabling the volunteer to write about 18 words per minute.

In my lab’s research, we’ve taken a more ambitious approach. Instead of decoding a user’s intent to move a cursor or a pen, we decode the intent to control the vocal tract, comprising dozens of muscles governing the larynx (commonly called the voice box), the tongue, and the lips.

The seemingly simple conversational setup for the paralyzed man [in pink shirt] is enabled by both sophisticated neurotech hardware and machine-learning systems that decode his brain signals. University of California, San Francisco

I began working in this area more than 10 years ago. As a neurosurgeon, I would often see patients with severe injuries that left them unable to speak. To my surprise, in many cases the locations of brain injuries didn’t match up with the syndromes I learned about in medical school, and I realized that we still have a lot to learn about how language is processed in the brain. I decided to study the underlying neurobiology of language and, if possible, to develop a brain-machine interface (BMI) to restore communication for people who have lost it. In addition to my neurosurgical background, my team has expertise in linguistics, electrical engineering, computer science, bioengineering, and medicine. Our ongoing clinical trial is testing both hardware and software to explore the limits of our BMI and determine what kind of speech we can restore to people.

The muscles involved in speech

Speech is one of the behaviors that sets humans apart. Plenty of other species vocalize, but only humans combine a set of sounds in myriad different ways to represent the world around them. It’s also an extraordinarily complicated motor act; some experts believe it’s the most complex motor action that people perform. Speaking is a product of modulated air flow through the vocal tract; with every utterance we shape the breath by creating audible vibrations in our laryngeal vocal folds and changing the shape of the lips, jaw, and tongue.

Many of the muscles of the vocal tract are quite unlike the joint-based muscles such as those in the arms and legs, which can move in only a few prescribed ways. For example, the muscle that controls the lips is a sphincter, while the muscles that make up the tongue are governed more by hydraulics: the tongue is largely composed of a fixed volume of muscular tissue, so moving one part of the tongue changes its shape elsewhere. The physics governing the movements of such muscles is totally different from that of the biceps or hamstrings.

Because there are so many muscles involved and they each have so many degrees of freedom, there’s essentially an infinite number of possible configurations. But when people speak, it turns out they use a relatively small set of core movements (which differ somewhat in different languages). For example, when English speakers make the “d” sound, they put their tongues behind their teeth; when they make the “k” sound, the backs of their tongues go up to touch the ceiling of the back of the mouth. Few people are conscious of the precise, complex, and coordinated muscle actions required to say the simplest word.

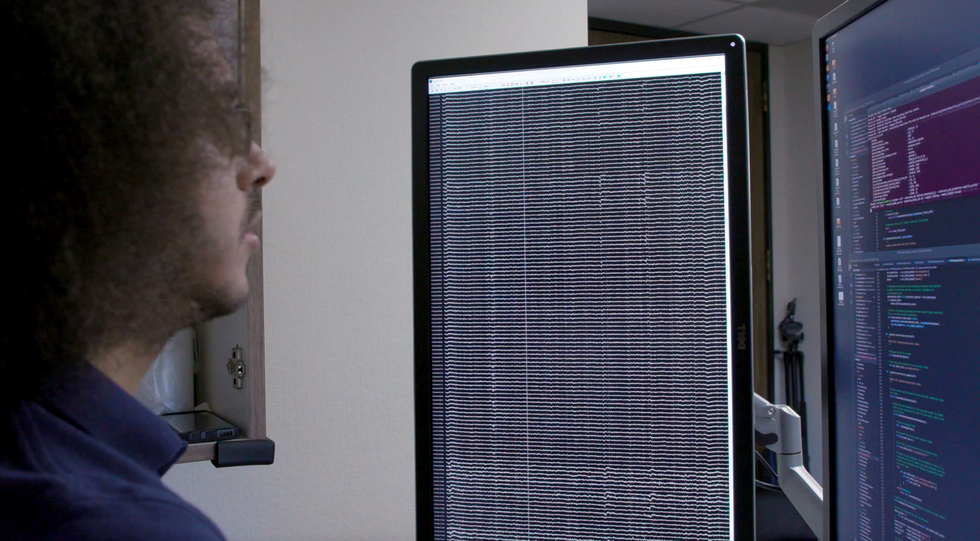

Team member David Moses looks at a readout of the patient’s brain waves [left screen] and a display of the decoding system’s activity [right screen]. University of California, San Francisco

My research group focuses on the parts of the brain’s motor cortex that send movement commands to the muscles of the face, throat, mouth, and tongue. Those brain regions are multitaskers: They manage muscle movements that produce speech and also the movements of those same muscles for swallowing, smiling, and kissing.

Studying the neural activity of those regions in a useful way requires both spatial resolution on the scale of millimeters and temporal resolution on the scale of milliseconds. Historically, noninvasive imaging systems have been able to provide one or the other, but not both. When we started this research, we found remarkably little data on how brain activity patterns were associated with even the simplest components of speech: phonemes and syllables.

Here we owe a debt of gratitude to our volunteers. At the UCSF epilepsy center, patients preparing for surgery typically have electrodes surgically placed over the surfaces of their brains for several days so we can map the regions involved when they have seizures. During those few days of wired-up downtime, many patients volunteer for neurological research experiments that make use of the electrode recordings from their brains. My group asked patients to let us study their patterns of neural activity while they spoke words.

The hardware involved is called electrocorticography (ECoG). The electrodes in an ECoG system don’t penetrate the brain but lie on the surface of it. Our arrays can contain several hundred electrode sensors, each of which records from thousands of neurons. So far, we’ve used an array with 256 channels. Our goal in those early studies was to discover the patterns of cortical activity when people speak simple syllables. We asked volunteers to say specific sounds and words while we recorded their neural patterns and tracked the movements of their tongues and mouths. Sometimes we did so by having them wear colored face paint and using a computer-vision system to extract the kinematic gestures; other times we used an ultrasound machine positioned under the patients’ jaws to image their moving tongues.

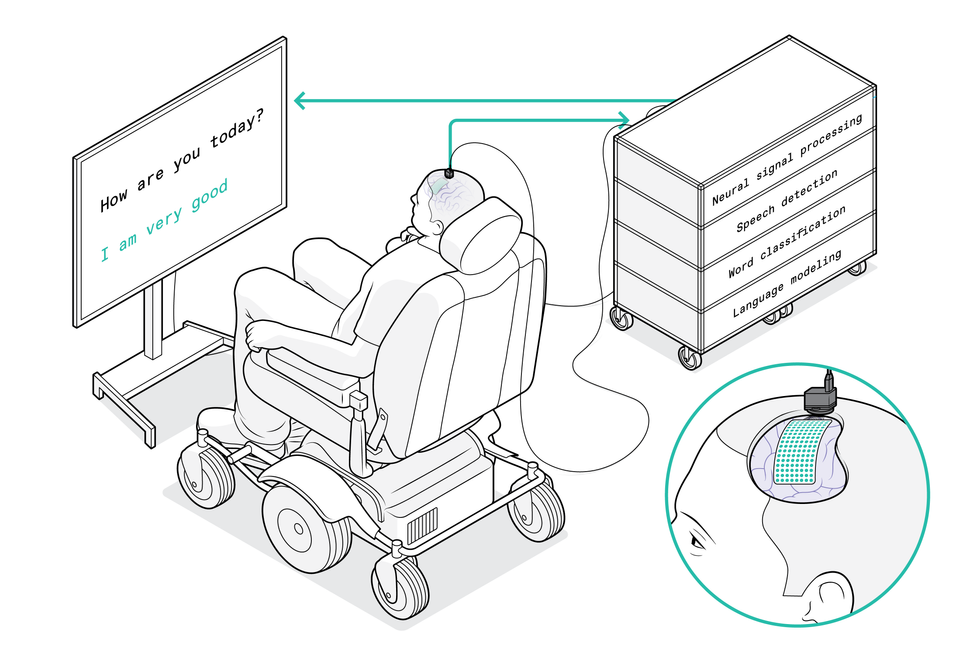

The system starts with a flexible electrode array that’s draped over the patient’s brain to pick up signals from the motor cortex. The array specifically captures movement commands intended for the patient’s vocal tract. A port affixed to the skull guides the wires that go to the computer system, which decodes the brain signals and translates them into the words that the patient wants to say. His answers then appear on the display screen. Chris Philpot

We used these systems to match neural patterns to movements of the vocal tract. At first we had a lot of questions about the neural code. One possibility was that neural activity encoded directions for particular muscles, and the brain essentially turned these muscles on and off as if pressing keys on a keyboard. Another idea was that the code determined the velocity of the muscle contractions. Yet another was that neural activity corresponded with coordinated patterns of muscle contractions used to produce a certain sound. (For example, to make the “aaah” sound, both the tongue and the jaw need to drop.) What we discovered was that there is a map of representations that controls different parts of the vocal tract, and that together the different brain areas combine in a coordinated manner to give rise to fluent speech.

The role of AI in today’s neurotech

Our work depends on the advances in artificial intelligence over the past decade. We can feed the data we collected about both neural activity and the kinematics of speech into a neural network, then let the machine-learning algorithm find patterns in the associations between the two data sets. It was possible to make connections between neural activity and produced speech, and to use this model to produce computer-generated speech or text. But this technique couldn’t train an algorithm for paralyzed people because we’d lack half of the data: We’d have the neural patterns, but nothing about the corresponding muscle movements.

The smarter way to use machine learning, we realized, was to break the problem into two steps. First, the decoder translates signals from the brain into intended movements of muscles in the vocal tract, then it translates those intended movements into synthesized speech or text.

We call this a biomimetic approach because it copies biology; in the human body, neural activity is directly responsible for the vocal tract’s movements and is only indirectly responsible for the sounds produced. A big advantage of this approach comes in the training of the decoder for that second step of translating muscle movements into sounds. Because those relationships between vocal tract movements and sound are fairly universal, we were able to train the decoder on large data sets derived from people who weren’t paralyzed.
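To make the two-step idea concrete, here is a minimal toy sketch in Python. It is not the lab's decoder: the weights, the three articulator features, and the sound templates are all made-up values chosen for illustration. The point is only the structure, in which the second stage knows nothing about the brain and could therefore be built from data recorded from people who can speak.

```python
import math

# Stage-1 weights (hypothetical toy values): 4 neural channels -> 3 articulator
# features (jaw opening, tongue-tip height, tongue-back height).
STAGE1_WEIGHTS = [
    [0.5, 0.5, 0.0, 0.0],  # jaw opening
    [0.0, 0.0, 1.0, 0.0],  # tongue-tip height
    [0.0, 0.0, 0.0, 1.0],  # tongue-back height
]

# Stage-2 templates (hypothetical toy values): a typical articulator posture per
# sound. This table depends only on movements, not on any neural recording.
SOUND_TEMPLATES = {
    "aaah": (1.0, 0.0, 0.0),  # jaw dropped, tongue low
    "d": (0.2, 1.0, 0.0),     # tongue tip raised behind the teeth
    "k": (0.2, 0.0, 1.0),     # back of the tongue raised
}


def neural_to_movement(neural):
    """Stage 1: map neural features to intended articulator movements."""
    return tuple(sum(w * x for w, x in zip(row, neural)) for row in STAGE1_WEIGHTS)


def movement_to_sound(movement):
    """Stage 2: pick the sound whose template is closest to the movement."""
    return min(SOUND_TEMPLATES, key=lambda s: math.dist(SOUND_TEMPLATES[s], movement))


def decode(neural):
    """Full pipeline: neural activity -> intended movement -> sound label."""
    return movement_to_sound(neural_to_movement(neural))


if __name__ == "__main__":
    print(decode([2.0, 0.0, 0.0, 0.1]))
    print(decode([0.2, 0.2, 0.1, 0.9]))
```

Because `movement_to_sound` is a separate function, it can be swapped or retrained without touching the stage that reads neural activity, which is the design advantage described above.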

A clinical trial to test our speech neuroprosthetic

The next big challenge was to bring the technology to the people who could really benefit from it.

The National Institutes of Health (NIH) is funding our pilot trial, which began in 2021. We already have two paralyzed volunteers with implanted ECoG arrays, and we hope to enroll more in the coming years. The primary goal is to improve their communication, and we’re measuring performance in terms of words per minute. An average adult typing on a full keyboard can type 40 words per minute, with the fastest typists reaching speeds of more than 80 words per minute.

Edward Chang was inspired to develop a brain-to-speech system by the patients he encountered in his neurosurgery practice. Barbara Ries

We think that tapping into the speech system can provide even better results. Human speech is much faster than typing: An English speaker can easily say 150 words in a minute. We’d like to enable paralyzed people to communicate at a rate of 100 words per minute. We have a lot of work to do to reach that goal, but we think our approach makes it a feasible target.
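The rates quoted in this article are easier to compare as the time needed to get a message across. The short Python sketch below does that arithmetic; the rates come from the text, while the 50-word message length is an arbitrary assumption chosen for illustration.

```python
# Communication rates quoted in the article, in words per minute.
RATES_WPM = {
    "imagined handwriting (2021 record)": 18,
    "average typing": 40,
    "target for the speech neuroprosthetic": 100,
    "natural English speech": 150,
}


def seconds_for(words, words_per_minute):
    """Time in seconds to convey `words` words at the given rate."""
    return 60.0 * words / words_per_minute


def summarize(words):
    """Seconds needed at each quoted rate, rounded to one decimal place."""
    return {name: round(seconds_for(words, wpm), 1) for name, wpm in RATES_WPM.items()}


if __name__ == "__main__":
    # 50 words is an assumed message length, not a figure from the trial.
    for name, seconds in summarize(50).items():
        print(f"{name}: {seconds} s")
```

At the 100-words-per-minute target, such a message would take 30 seconds, against 75 seconds for an average typist.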

The implant procedure is routine. First the surgeon removes a small portion of the skull; next, the flexible ECoG array is gently placed across the surface of the cortex. Then a small port is fixed to the skull bone and exits through a separate opening in the scalp. We currently need that port, which attaches to external wires to transmit data from the electrodes, but we hope to make the system wireless in the future.

We’ve considered using penetrating microelectrodes, because they can record from smaller neural populations and may therefore provide more detail about neural activity. But the current hardware isn’t as robust and safe as ECoG for clinical applications, especially over many years.

Another consideration is that penetrating electrodes typically require daily recalibration to turn the neural signals into clear commands, and research on neural devices has shown that speed of setup and performance reliability are key to getting people to use the technology. That’s why we’ve prioritized stability in creating a “plug and play” system for long-term use. We conducted a study looking at the variability of a volunteer’s neural signals over time and found that the decoder performed better if it used data patterns across multiple sessions and multiple days. In machine-learning terms, we say that the decoder’s “weights” carried over, creating consolidated neural signals.
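One way to picture why data from several sessions helps is a toy Python sketch like the one below. It is an illustration under strong assumptions, not a reproduction of the study: each word is reduced to a single made-up feature value, each recording day adds a made-up drift, and the "decoder" is a simple nearest-centroid rule. Pooling sessions stands in here for carrying weights over between days.

```python
from statistics import mean

# Hypothetical one-dimensional neural feature for each word (toy values).
TRUE_MEANS = {"yes": 1.0, "no": -1.0}


def make_session(drift):
    """One sample per word, shifted by a session-wide drift."""
    return [(value + drift, word) for word, value in TRUE_MEANS.items()]


def fit_centroids(sessions):
    """Average the feature per word over all samples in the given sessions."""
    pooled = [sample for session in sessions for sample in session]
    return {
        word: mean(x for x, label in pooled if label == word)
        for word in TRUE_MEANS
    }


def accuracy(centroids, session):
    """Fraction of samples whose nearest centroid carries the right label."""
    hits = sum(
        min(centroids, key=lambda w: abs(centroids[w] - x)) == label
        for x, label in session
    )
    return hits / len(session)


# Assumed drifts for two training days and one later, held-out day.
day1, day2, held_out = make_session(0.8), make_session(-0.6), make_session(-0.3)

single_day = accuracy(fit_centroids([day1]), held_out)
multi_day = accuracy(fit_centroids([day1, day2]), held_out)

if __name__ == "__main__":
    print("trained on one day:", single_day)
    print("trained on two days:", multi_day)
```

With these particular toy numbers, the decoder fit on one day mislabels a held-out sample that the two-day decoder gets right, because averaging over days cancels part of the drift.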

Because our paralyzed volunteers can’t speak while we watch their brain patterns, we asked our first volunteer to try two different approaches. He started with a list of 50 words that are handy for daily life, such as “hungry,” “thirsty,” “please,” “help,” and “computer.” During 48 sessions over several months, we sometimes asked him to just imagine saying each of the words on the list, and sometimes asked him to overtly try to say them. We found that attempts to speak generated clearer brain signals and were sufficient to train the decoding algorithm. Then the volunteer could use those words from the list to generate sentences of his own choosing, such as “No I am not thirsty.”

We’re now pushing to expand to a broader vocabulary. To make that work, we need to continue to improve the current algorithms and interfaces, but I am confident those improvements will happen in the coming months and years. Now that the proof of principle has been established, the goal is optimization. We can focus on making our system faster, more accurate, and, most important, safer and more reliable. Things should move quickly now.

Probably the biggest breakthroughs will come if we can get a better understanding of the brain systems we’re trying to decode, and how paralysis alters their activity. We’ve come to realize that the neural patterns of a paralyzed person who can’t send commands to the muscles of their vocal tract are very different from those of an epilepsy patient who can. We’re attempting an ambitious feat of BMI engineering while there is still lots to learn about the underlying neuroscience. We believe it will all come together to give our patients their voices back.
