Ask a smart home device for the weather forecast, and it takes several seconds for the device to respond. One reason this latency occurs is that connected devices don’t have enough memory or power to store and run the enormous machine-learning models needed for the device to understand what a user is asking of it. The model is stored in a data center that may be hundreds of miles away, where the answer is computed and sent to the device.

MIT researchers have created a new method for computing directly on these devices, which drastically reduces this latency. Their technique shifts the memory-intensive steps of running a machine-learning model to a central server where components of the model are encoded onto light waves.

The waves are transmitted to a connected device using fiber optics, which enables large amounts of data to be sent through a network at very high speed. The receiver then employs a simple optical device that rapidly performs computations using the parts of a model carried by those light waves.

This technique leads to more than a hundredfold improvement in energy efficiency when compared to other methods. It could also improve security, since a user’s data do not need to be transferred to a central location for computation.

This method could enable a self-driving car to make decisions in real time while using just a tiny percentage of the energy currently required by power-hungry computers. It could also allow a user to have a latency-free conversation with their smart home device, be used for live video processing over cellular networks, or even enable high-speed image classification on a spacecraft millions of miles from Earth.

“Every time you want to run a neural network, you have to run the program, and how fast you can run the program depends on how fast you can pipe the program in from memory. Our pipe is massive: it corresponds to sending a full feature-length movie over the internet every millisecond or so. That is how fast data comes into our system. And it can compute as fast as that,” says senior author Dirk Englund, an associate professor in the Department of Electrical Engineering and Computer Science (EECS) and member of the MIT Research Laboratory of Electronics.

Joining Englund on the paper are lead author and EECS grad student Alexander Sludds; EECS grad student Saumil Bandyopadhyay; Research Scientist Ryan Hamerly; and others from MIT, the MIT Lincoln Laboratory, and Nokia Corporation. The research is published today in Science.

Lightening the load

Neural networks are machine-learning models that use layers of connected nodes, or neurons, to recognize patterns in datasets and perform tasks, like classifying images or recognizing speech. But these models can contain billions of weight parameters, which are numeric values that transform input data as they are processed. These weights must be stored in memory. At the same time, the data transformation process involves billions of algebraic computations, which require a great deal of power to perform.
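To make the scale concrete, here is a minimal Python sketch of how weights transform input data in one fully connected layer, and how quickly the multiplication count grows. The function names and the layer sizes (784, 100, 10) are illustrative assumptions, not details taken from the paper.

```python
def dense_layer(weights, inputs):
    """Apply one fully connected layer: each output is a weighted sum of the inputs."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


def multiply_count(layer_sizes):
    """Count weight multiplications for one pass through a stack of dense layers."""
    return sum(n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


# Hypothetical 2x3 weight matrix and 3-element input vector.
weights = [[0.5, -1.0, 2.0],
           [1.5, 0.0, -0.5]]
inputs = [2.0, 1.0, 0.5]
outputs = dense_layer(weights, inputs)

# Hypothetical small network: 784 inputs, 100 hidden nodes, 10 outputs.
small_network_multiplies = multiply_count([784, 100, 10])
```

Every one of those multiplications needs its weight fetched from memory first, which is why even this small hypothetical network already requires tens of thousands of fetches per input.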

The process of fetching data (the weights of the neural network, in this case) from memory and moving them to the parts of a computer that do the actual computation is one of the biggest limiting factors to speed and energy efficiency, says Sludds.

“So our thought was, why don’t we take all that heavy lifting, the process of fetching billions of weights from memory, move it away from the edge device and put it someplace where we have abundant access to power and memory, which gives us the ability to fetch those weights quickly?” he says.

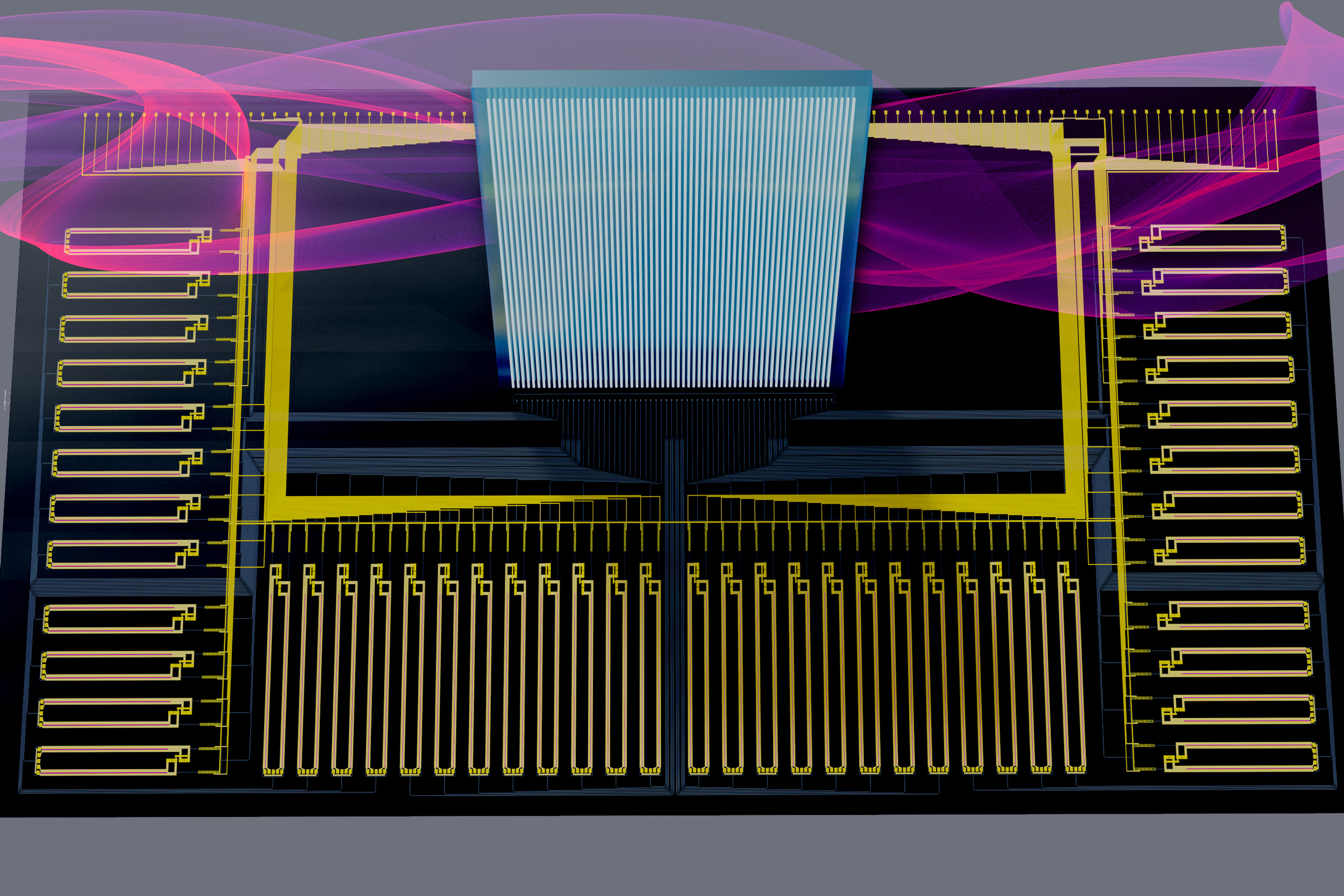

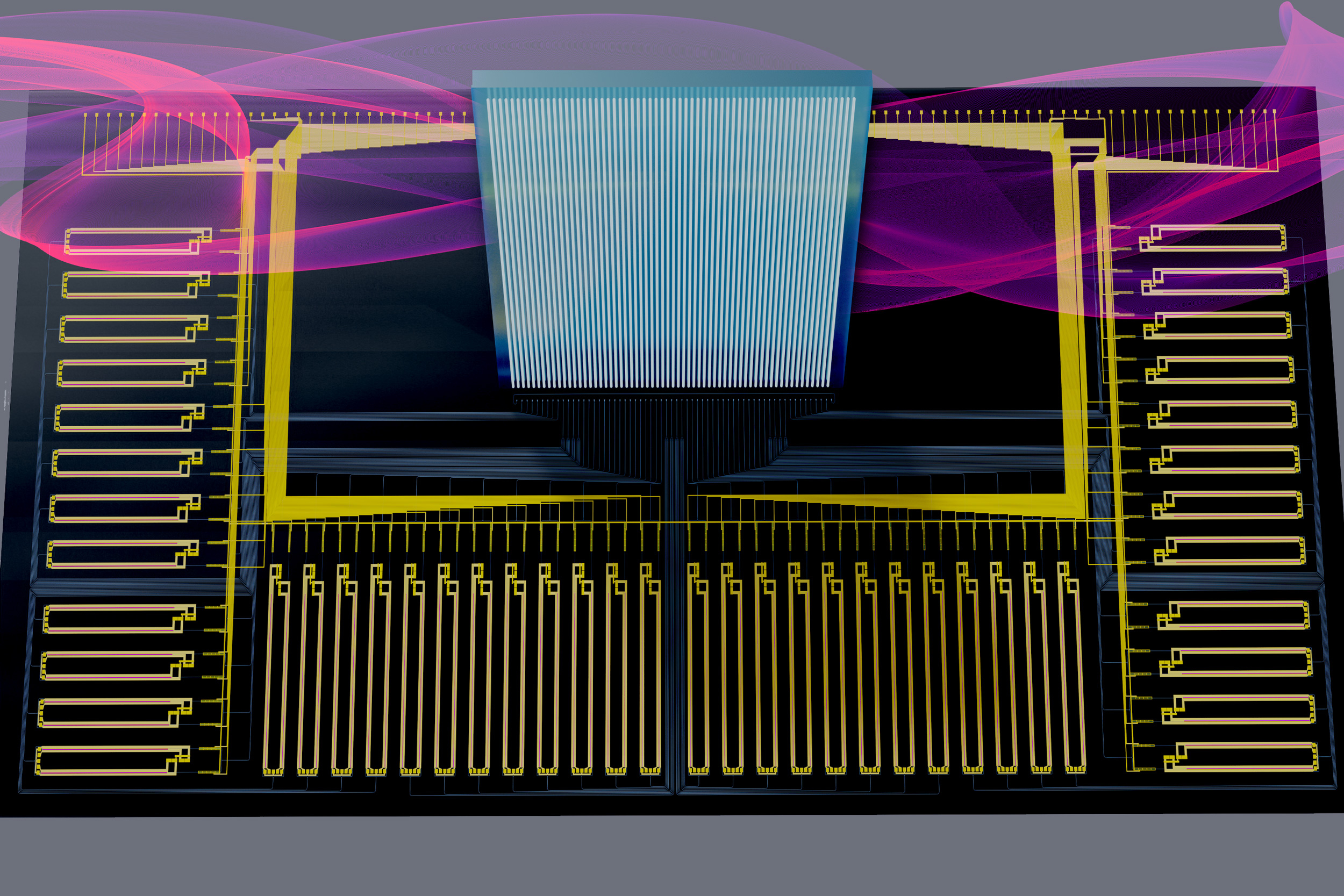

The neural network architecture they developed, Netcast, involves storing weights in a central server that is connected to a novel piece of hardware called a smart transceiver. This smart transceiver, a thumb-sized chip that can receive and transmit data, uses technology known as silicon photonics to fetch trillions of weights from memory each second.

It receives weights as electrical signals and imprints them onto light waves. Since the weight data are encoded as bits (1s and 0s), the transceiver converts them by switching lasers; a laser is turned on for a 1 and off for a 0. It combines these light waves and then periodically transfers them through a fiber optic network so a client device doesn’t need to query the server to receive them.
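The on/off scheme described above can be sketched in a few lines of Python. This is a simplified illustration, not the transceiver's actual encoding: the 8-bit width, the restriction of weights to the range 0 to 1, and both function names are assumptions made for the example.

```python
def weight_to_laser_states(weight, bits=8):
    """Quantize a weight in [0, 1] and return its bits, most significant first.

    True means the laser is on (bit 1); False means it is off (bit 0).
    """
    if not 0.0 <= weight <= 1.0:
        raise ValueError("this sketch assumes weights scaled to [0, 1]")
    level = round(weight * (2 ** bits - 1))
    return [bool((level >> i) & 1) for i in reversed(range(bits))]


def laser_states_to_weight(states):
    """Recover the quantized weight from a received on/off sequence."""
    level = 0
    for on in states:
        level = (level << 1) | int(on)
    return level / (2 ** len(states) - 1)


states = weight_to_laser_states(0.3)
recovered = laser_states_to_weight(states)
```

With 8 bits, the recovered value differs from the original weight by at most half a quantization step, so more bits per weight would trade bandwidth for precision.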

“Optics is great because there are many ways to carry data within optics. For instance, you can put data on different colors of light, and that enables a much higher data throughput and greater bandwidth than with electronics,” explains Bandyopadhyay.

Trillions per second

Once the light waves arrive at the client device, a simple optical component known as a broadband “Mach-Zehnder” modulator uses them to perform super-fast, analog computation. This involves encoding input data from the device, such as sensor information, onto the weights. Then it sends each individual wavelength to a receiver that detects the light and measures the result of the computation.
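One way to picture this step is the idealized Python model below. It assumes that each wavelength carries the weight stream for one output, that the broadband modulator scales every wavelength by the same input value at each time step, and that each receiver adds up the light it detects over time. Those assumptions, along with the function name, are simplifications for illustration; noise, calibration, and negative values are ignored.

```python
def optical_layer(weight_streams, inputs):
    """Idealized model of modulate-then-detect computation.

    weight_streams[k][t] is the light intensity on wavelength k at time step t.
    inputs[t] is the value the modulator applies at time step t.
    Returns one accumulated reading per wavelength (one per receiver).
    """
    readings = [0.0] * len(weight_streams)
    for t, x in enumerate(inputs):
        for k, stream in enumerate(weight_streams):
            # The modulator scales the incoming light by the input value;
            # the receiver for wavelength k accumulates the product.
            readings[k] += stream[t] * x
    return readings


# Two hypothetical wavelengths, three time steps.
weight_streams = [[0.5, 0.25, 1.0],
                  [1.0, 0.0, 0.5]]
inputs = [1.0, 0.5, 0.25]
readings = optical_layer(weight_streams, inputs)
```

In this model, each receiver ends up holding the dot product of one weight stream with the input, which is the core operation of a neural network layer.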

The researchers devised a way to use this modulator to do trillions of multiplications per second, which vastly increases the speed of computation on the device while using only a tiny amount of power.

“In order to make something faster, you need to make it more energy efficient. But there’s a trade-off. We’ve built a system that can operate with about a milliwatt of power but still do trillions of multiplications per second. In terms of both speed and energy efficiency, that is a gain of orders of magnitude,” Sludds says.
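A rough back-of-the-envelope calculation shows what those figures imply per operation. The sketch below takes "about a milliwatt" as exactly 1 milliwatt and "trillions" as a conservative 1 trillion multiplications per second; both round numbers are assumptions, and the result covers only the quoted client-side power, not the server or the network.

```python
def energy_per_multiply_joules(power_watts, multiplies_per_second):
    """Energy spent per multiplication: power divided by operation rate."""
    return power_watts / multiplies_per_second


# Assumed round figures: 1 milliwatt and 1e12 multiplications per second.
energy_joules = energy_per_multiply_joules(1e-3, 1e12)
energy_femtojoules = energy_joules * 1e15
```

Under these assumptions the client spends roughly one femtojoule (a quadrillionth of a joule) per multiplication, and less if the true rate is several trillion per second.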

They tested this architecture by sending weights over an 86-kilometer fiber that connects their lab to MIT Lincoln Laboratory. Netcast enabled machine learning with high accuracy (98.7 percent for image classification and 98.8 percent for digit recognition) at rapid speeds.

“We had to do some calibration, but I was surprised by how little work we had to do to achieve such high accuracy out of the box. We were able to get commercially relevant accuracy,” adds Hamerly.

Moving forward, the researchers want to iterate on the smart transceiver chip to achieve even better performance. They also want to miniaturize the receiver, which is currently the size of a shoe box, down to the size of a single chip so it could fit onto a smart device like a cell phone.

“Using photonics and light as a platform for computing is a really exciting area of research with potentially huge implications on the speed and efficiency of our information technology landscape,” says Euan Allen, a Royal Academy of Engineering Research Fellow at the University of Bath, who was not involved with this work. “The work of Sludds et al. is an exciting step toward seeing real-world implementations of such devices, introducing a new and practical edge-computing scheme whilst also exploring some of the fundamental limitations of computation at very low (single-photon) light levels.”

The research is funded, in part, by NTT Research, the National Science Foundation, the Air Force Office of Scientific Research, the Air Force Research Laboratory, and the Army Research Office.