Today we are publicly sharing Microsoft’s Responsible AI Standard, a framework to guide how we build AI systems. It is an important step in our journey to develop better, more trustworthy AI. We are releasing our latest Responsible AI Standard to share what we have learned, invite feedback from others, and contribute to the discussion about building better norms and practices around AI.

Guiding product development towards more responsible outcomes

AI systems are the product of many different decisions made by those who develop and deploy them. From system purpose to how people interact with AI systems, we need to proactively guide these decisions toward more beneficial and equitable outcomes. That means keeping people and their goals at the center of system design decisions and respecting enduring values like fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability.

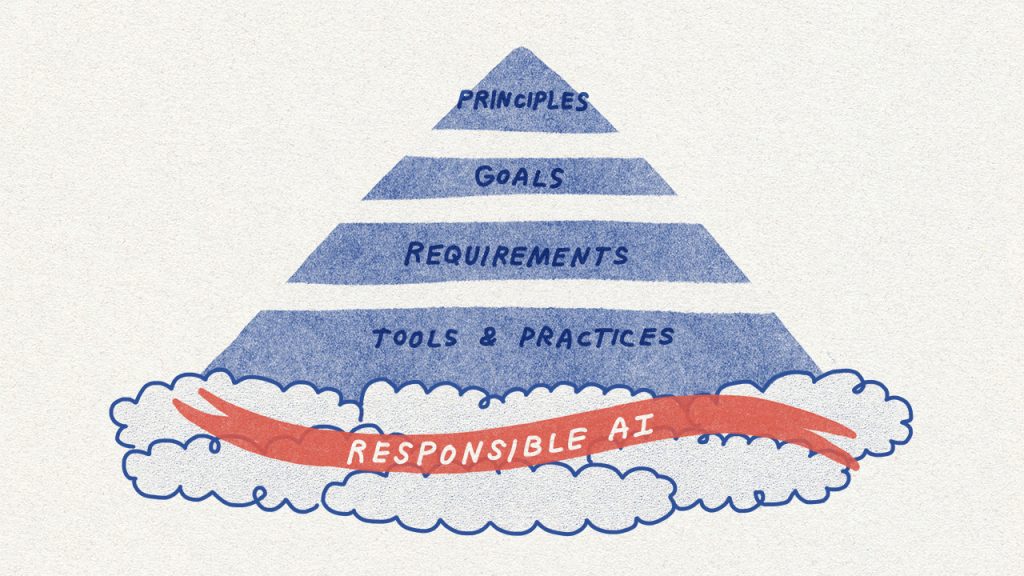

The Responsible AI Standard sets out our best thinking on how we will build AI systems to uphold these values and earn society’s trust. It provides specific, actionable guidance for our teams that goes beyond the high-level principles that have dominated the AI landscape to date.

The Standard details concrete goals or outcomes that teams developing AI systems must strive to secure. These goals help break down a broad principle like ‘accountability’ into its key enablers, such as impact assessments, data governance, and human oversight. Each goal is then composed of a set of requirements, which are steps that teams must take to ensure that AI systems meet the goals throughout the system lifecycle. Finally, the Standard maps available tools and practices to specific requirements so that Microsoft’s teams implementing it have resources to help them succeed.

The need for this type of practical guidance is growing. AI is becoming more and more a part of our lives, and yet our laws are lagging behind. They have not caught up with AI’s unique risks or society’s needs. While we see signs that government action on AI is expanding, we also recognize our responsibility to act. We believe that we need to work towards ensuring AI systems are responsible by design.

Refining our policy and learning from our product experiences

Over the course of a year, a multidisciplinary group of researchers, engineers, and policy experts crafted the second version of our Responsible AI Standard. It builds on our previous responsible AI efforts, including the first version of the Standard that launched internally in the fall of 2019, as well as the latest research and some important lessons learned from our own product experiences.

Fairness in Speech-to-Text Technology

The potential of AI systems to exacerbate societal biases and inequities is one of the most widely recognized harms associated with these systems. In March 2020, an academic study revealed that speech-to-text technology across the tech sector produced error rates for members of some Black and African American communities that were nearly double those for white users. We stepped back, considered the study’s findings, and learned that our pre-release testing had not accounted satisfactorily for the rich diversity of speech across people with different backgrounds and from different regions. After the study was published, we engaged an expert sociolinguist to help us better understand this diversity and sought to expand our data collection efforts to narrow the performance gap in our speech-to-text technology. In the process, we found that we needed to grapple with challenging questions about how best to collect data from communities in a way that engages them appropriately and respectfully. We also learned the value of bringing experts into the process early, including to better understand factors that might account for variations in system performance.

The Responsible AI Standard records the pattern we followed to improve our speech-to-text technology. As we continue to roll out the Standard across the company, we expect the Fairness Goals and Requirements identified in it will help us get ahead of potential fairness harms.

Appropriate Use Controls for Custom Neural Voice and Facial Recognition

Azure AI’s Custom Neural Voice is another innovative Microsoft speech technology that enables the creation of a synthetic voice that sounds nearly identical to the original source. AT&T has brought this technology to life with an award-winning in-store Bugs Bunny experience, and Progressive has brought Flo’s voice to online customer interactions, among uses by many other customers. This technology has exciting potential in education, accessibility, and entertainment, and yet it is also easy to imagine how it could be used to inappropriately impersonate speakers and deceive listeners.

Our review of this technology through our Responsible AI program, including the Sensitive Uses review process required by the Responsible AI Standard, led us to adopt a layered control framework: we restricted customer access to the service, ensured acceptable use cases were proactively defined and communicated through a Transparency Note and Code of Conduct, and established technical guardrails to help ensure the active participation of the speaker when creating a synthetic voice. Through these and other controls, we helped protect against misuse while maintaining beneficial uses of the technology.

Building upon what we learned from Custom Neural Voice, we will apply similar controls to our facial recognition services. After a transition period for existing customers, we are limiting access to these services to managed customers and partners, narrowing the use cases to pre-defined acceptable ones, and leveraging technical controls engineered into the services.

Fit for Purpose and Azure Face Capabilities

Finally, we recognize that for AI systems to be trustworthy, they need to be appropriate solutions to the problems they are designed to solve. As part of our work to align our Azure Face service to the requirements of the Responsible AI Standard, we are also retiring capabilities that infer emotional states and identity attributes such as gender, age, smile, facial hair, hair, and makeup.

Taking emotional states as an example, we have decided we will not provide open-ended API access to technology that can scan people’s faces and purport to infer their emotional states based on their facial expressions or movements. Experts inside and outside the company have highlighted the lack of scientific consensus on the definition of “emotions,” the challenges in how inferences generalize across use cases, regions, and demographics, and the heightened privacy concerns around this type of capability. We also decided that we need to carefully analyze all AI systems that purport to infer people’s emotional states, whether the systems use facial analysis or any other AI technology. The Fit for Purpose Goal and Requirements in the Responsible AI Standard now help us to make system-specific validity assessments upfront, and our Sensitive Uses process helps us provide nuanced guidance for high-impact use cases, grounded in science.

These real-world challenges informed the development of Microsoft’s Responsible AI Standard and demonstrate its impact on the way we design, develop, and deploy AI systems.

For those wanting to dig into our approach further, we have also made available some key resources that support the Responsible AI Standard: our Impact Assessment template and guide, and a collection of Transparency Notes. Impact Assessments have proven valuable at Microsoft to ensure teams explore the impact of their AI system (including its stakeholders, intended benefits, and potential harms) in depth at the earliest design stages. Transparency Notes are a new form of documentation in which we disclose to our customers the capabilities and limitations of our core building block technologies, so they have the knowledge necessary to make responsible deployment choices.

A multidisciplinary, iterative journey

Our updated Responsible AI Standard reflects hundreds of inputs across Microsoft technologies, professions, and geographies. It is a significant step forward for our practice of responsible AI because it is much more actionable and concrete: it sets out practical approaches for identifying, measuring, and mitigating harms ahead of time, and requires teams to adopt controls to secure beneficial uses and guard against misuse. You can learn more about the development of the Standard in this

While our Standard is an important step in Microsoft’s responsible AI journey, it is just one step. As we make progress with implementation, we expect to encounter challenges that require us to pause, reflect, and adjust. Our Standard will remain a living document, evolving to address new research, technologies, laws, and learnings from within and outside the company.

There is a rich and active global dialog about how to create principled and actionable norms to ensure organizations develop and deploy AI responsibly. We have benefited from this discussion and will continue to contribute to it. We believe that industry, academia, civil society, and government need to collaborate to advance the state of the art and learn from one another. Together, we need to answer open research questions, close measurement gaps, and design new practices, patterns, resources, and tools.

Better, more equitable futures will require new guardrails for AI. Microsoft’s Responsible AI Standard is one contribution toward this goal, and we are engaging in the hard and necessary implementation work across the company. We are committed to being open, honest, and transparent in our efforts to make meaningful progress.