As computing and AI advancements spanning decades are enabling incredible opportunities for people and society, they’re also raising questions about responsible development and deployment. For example, the machine learning models powering AI systems may not perform the same for everyone or every condition, potentially leading to harms related to safety, reliability, and fairness. Single metrics often used to represent model capability, such as overall accuracy, do little to show under which circumstances or for whom failure is more likely; meanwhile, common approaches to addressing failures, like adding more data and compute or increasing model size, don’t get to the root of the problem. Plus, these blanket trial-and-error approaches can be resource intensive and financially costly.
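To make the point about single metrics concrete, here is a minimal worked sketch in plain Python that reports overall accuracy alongside accuracy for each data subset. It is illustrative only and is not code from any Microsoft tool; the function names, the subset labels ("sunny", "snow"), and the toy labels and predictions are all hypothetical. Libraries such as Fairlearn offer this kind of breakdown through `MetricFrame`; the sketch avoids any dependency.

```python
from collections import defaultdict

def accuracy(pairs):
    """Fraction of (label, prediction) pairs that match."""
    return sum(y == p for y, p in pairs) / len(pairs)

def accuracy_by_group(y_true, y_pred, groups):
    """Return overall accuracy and a dict of accuracy per data subset."""
    buckets = defaultdict(list)
    for y, p, g in zip(y_true, y_pred, groups):
        buckets[g].append((y, p))
    overall = accuracy(list(zip(y_true, y_pred)))
    return overall, {g: accuracy(pairs) for g, pairs in buckets.items()}

# Hypothetical toy data: 8 examples from a "sunny" subset, 2 from a "snow" subset.
y_true = [1, 0, 1, 1, 0, 1, 0, 1, 1, 0]
y_pred = [1, 0, 1, 1, 0, 1, 0, 1, 0, 1]
groups = ["sunny"] * 8 + ["snow"] * 2

overall, per_group = accuracy_by_group(y_true, y_pred, groups)
print(f"overall accuracy: {overall:.2f}")
for group, acc in per_group.items():
    print(f"  {group}: {acc:.2f}")
# overall accuracy: 0.80
#   sunny: 1.00
#   snow: 0.00
```

On this toy data, an overall accuracy of 0.80 hides the fact that every "snow" example is misclassified, which is exactly the kind of failure a single aggregate number cannot reveal.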

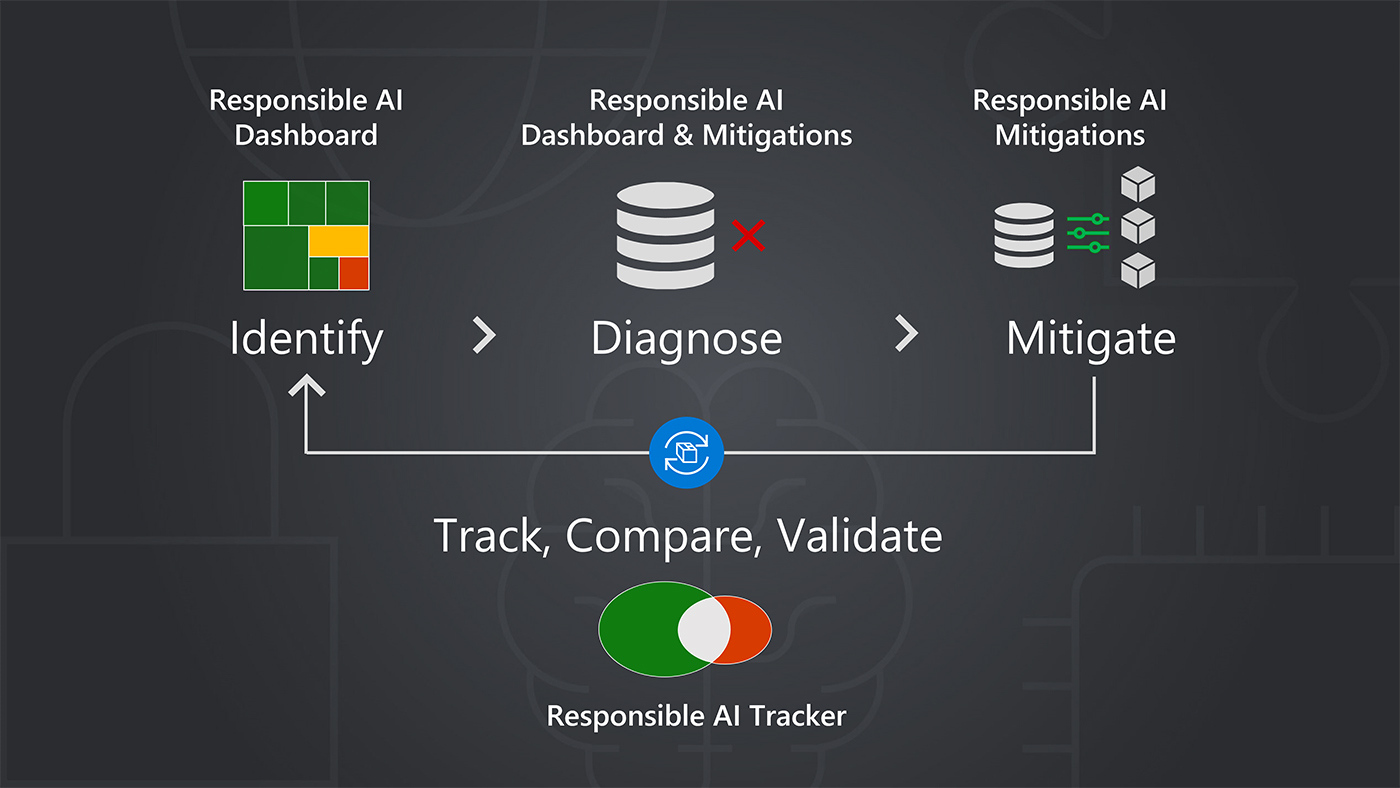

Through its Responsible AI Toolbox (a collection of tools and functionalities designed to help practitioners maximize the benefits of AI systems while mitigating harms) and other efforts for responsible AI, Microsoft offers an alternative: a principled approach to AI development centered around targeted model improvement. Improving models through targeting methods aims to identify solutions tailored to the causes of specific failures. This is a critical part of a model improvement life cycle that includes not only the identification, diagnosis, and mitigation of failures but also the tracking, comparison, and validation of mitigation options. The approach helps practitioners better address failures without introducing new ones or eroding other aspects of model performance.

“With targeted model improvement, we’re trying to encourage a more systematic process for improving machine learning in research and practice,” says Besmira Nushi, a Microsoft Principal Researcher involved with the development of tools for supporting responsible AI. She is a member of the research team behind the toolbox’s newest additions: the Responsible AI Mitigations Library, which enables practitioners to more easily experiment with different techniques for addressing failures, and the Responsible AI Tracker, which uses visualizations to show the effectiveness of the different techniques for more informed decision-making.

Targeted model improvement: From identification to validation

The tools in the Responsible AI Toolbox, available in open source and through the Azure Machine Learning platform offered by Microsoft, have been designed with each stage of the model improvement life cycle in mind, informing targeted model improvement through error analysis, fairness assessment, data exploration, and interpretability.

For example, the new mitigations library bolsters mitigation by offering a means of managing failures that occur in data preprocessing, such as those caused by a lack of data or lower-quality data for a particular subset. For tracking, comparison, and validation, the new tracker brings the model, code, visualizations, and other development components together for easy-to-follow documentation of mitigation efforts. The tracker’s main feature is disaggregated model evaluation and comparison, which breaks down model performance by data subset to present a clearer picture of a mitigation’s effects on the intended subset, as well as on other subsets, helping to uncover hidden performance declines before models are deployed and used by individuals and organizations. Additionally, the tracker allows practitioners to look at performance for subsets of data across iterations of a model, helping them determine the most appropriate model for deployment.
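The idea behind disaggregated comparison can be sketched in a few lines: compute accuracy per subset for a baseline model and for a mitigated candidate, then flag any subset where the candidate got worse. This is a minimal illustration in plain Python under stated assumptions, not the Responsible AI Tracker’s implementation or API; the functions `accuracy_by_subset`, `compare_to_baseline`, and `declined_subsets`, the `tolerance` parameter, the subset names, and the toy predictions are all hypothetical.

```python
from collections import defaultdict

def accuracy_by_subset(y_true, y_pred, subsets):
    """Accuracy computed separately for each data subset."""
    correct, total = defaultdict(int), defaultdict(int)
    for y, p, s in zip(y_true, y_pred, subsets):
        total[s] += 1
        correct[s] += int(y == p)
    return {s: correct[s] / total[s] for s in total}

def compare_to_baseline(y_true, preds_by_model, subsets, baseline):
    """Per-subset accuracy of every model and its change relative to the baseline."""
    table = {name: accuracy_by_subset(y_true, preds, subsets)
             for name, preds in preds_by_model.items()}
    deltas = {name: {s: acc - table[baseline][s] for s, acc in scores.items()}
              for name, scores in table.items() if name != baseline}
    return table, deltas

def declined_subsets(deltas, tolerance=0.0):
    """Subsets where a candidate model does worse than the baseline (hypothetical helper)."""
    return {name: sorted(s for s, d in diff.items() if d < -tolerance)
            for name, diff in deltas.items()}

# Hypothetical toy data: subset "B" is the one the mitigation targets.
subsets = ["A"] * 4 + ["B"] * 3 + ["C"] * 3
y_true = [1, 0, 1, 0, 1, 1, 0, 1, 0, 1]
preds = {
    "baseline":  [1, 0, 1, 0, 0, 0, 1, 1, 0, 1],  # fails on all of "B"
    "mitigated": [1, 0, 1, 0, 1, 1, 0, 1, 1, 0],  # fixes "B" but breaks "C"
}

for name, p in preds.items():
    overall = sum(y == q for y, q in zip(y_true, p)) / len(y_true)
    print(f"{name}: overall accuracy {overall:.1f}")
table, deltas = compare_to_baseline(y_true, preds, subsets, "baseline")
print(declined_subsets(deltas))
# baseline: overall accuracy 0.7
# mitigated: overall accuracy 0.8
# {'mitigated': ['C']}
```

In this toy case the mitigated model looks better overall (0.8 versus 0.7) and repairs the targeted subset, yet the per-subset comparison surfaces a decline on subset C that the aggregate number hides. The `tolerance` argument is only an illustrative knob for ignoring small fluctuations between iterations.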

“Data scientists could build many of the functionalities that we offer with these tools; they could build their own infrastructure,” says Nushi. “But to do that for every project requires a lot of time and effort. The benefit of these tools is scale. Here, they can accelerate their work with tools that apply to multiple scenarios, freeing them up to focus on the work of building more reliable, trustworthy models.”

Besmira Nushi, Microsoft Principal Researcher

Building tools for responsible AI that are intuitive, effective, and valuable can help practitioners consider potential harms and their mitigation from the beginning when developing a new model. The result can be more confidence that the work they’re doing is supporting AI that is safer, fairer, and more reliable because it was designed that way, says Nushi. The benefits of using these tools can be far-reaching, ranging from AI systems that assess loan applicants more fairly by having comparable accuracy across demographic groups to traffic sign detectors in self-driving cars that perform better across conditions like sun, snow, and rain.

Creating tools that can have the impact researchers like Nushi envision often begins with a research question and involves converting the resulting work into something people and teams can readily and confidently incorporate into their workflows.

“Making that jump from a research paper’s code on GitHub to something that’s usable involves a lot more process in terms of understanding what is the interaction that the data scientist would need, what would make them more productive,” says Nushi. “In research, we come up with many ideas. Some of them are too fancy, so fancy that they cannot be used in the real world because they cannot be operationalized.”

Multidisciplinary research teams consisting of user experience researchers, designers, and machine learning and front-end engineers have helped ground the process, as have the contributions of those who specialize in all things responsible AI. Microsoft Research works closely with the incubation team of Aether, the advisory body for Microsoft leadership on AI ethics and effects, to create tools based on the research. Equally important has been partnership with product teams whose mission is to operationalize AI responsibly, says Nushi. For Microsoft Research, that is often Azure Machine Learning, the Microsoft platform for end-to-end ML model development. Through this relationship, Azure Machine Learning can offer what Microsoft Principal PM Manager Mehrnoosh Sameki refers to as customer “signals,” essentially a reliable stream of wants and needs straight from practitioners on the ground. And Azure Machine Learning is just as excited to leverage what Microsoft Research and Aether have to offer: cutting-edge science. The relationship has been fruitful.

When the current Azure Machine Learning platform made its debut five years ago, it was clear that tooling for responsible AI was going to be necessary. Beyond aligning with the Microsoft vision for AI development, such tooling was something customers were actively seeking out. They approached the Azure Machine Learning team with requests for explainability and interpretability features, robust model validation methods, and fairness assessment tools, recounts Sameki, who leads the Azure Machine Learning team responsible for tooling for responsible AI. Microsoft Research, Aether, and Azure Machine Learning teamed up to integrate tools for responsible AI into the platform, including InterpretML for understanding model behavior, Error Analysis for identifying data subsets for which failures are more likely, and Fairlearn for assessing and mitigating fairness-related issues. InterpretML and Fairlearn are independent community-driven projects that power several Responsible AI Toolbox functionalities.

Before long, Azure Machine Learning approached Microsoft Research with another signal: customers wanted to use the tools together, in one interface. The research team responded with an approach that enabled interoperability, allowing the tools to exchange data and insights and facilitating a seamless ML debugging experience. Over the course of two to three months, the teams met weekly to conceptualize and design “a single pane of glass” from which practitioners could use the tools together. As Azure Machine Learning developed the project, Microsoft Research stayed involved, from providing design expertise to contributing to how the story and capabilities of what had become the Responsible AI dashboard would be communicated to customers.

After the release, the teams dove into the next open challenge: enabling practitioners to better mitigate failures. Enter the Responsible AI Mitigations Library and the Responsible AI Tracker, which were developed by Microsoft Research in collaboration with Aether. Microsoft Research was well equipped with the resources and expertise to identify the most effective visualizations for disaggregated model comparison (there was very little previous work available on it) and to find the right abstractions for handling the complexities of applying different mitigations to different subsets of data through a flexible, easy-to-use interface. Throughout the process, the Azure team provided insight into how the new tools fit into the existing infrastructure.

With the Azure team bringing practitioner needs and the platform to the table and research bringing the latest in model evaluation, responsible testing, and the like, it’s the perfect fit, says Sameki.

While making these tools available through Azure Machine Learning supports customers in bringing their products and services to market responsibly, making these tools open source is important to cultivating an even larger landscape of responsibly developed AI. When release ready, these tools for responsible AI are made open source and then integrated into the Azure Machine Learning platform. The reasons for going with an open-source-first approach are numerous, say Nushi and Sameki:

- freely available tools for responsible AI are an educational resource for learning and teaching the practice of responsible AI;

- more contributors, both internal and external to Microsoft, add quality, longevity, and excitement to the work and topic; and

- the ability to integrate them into any platform or infrastructure encourages more widespread use.

The decision also represents one of the Microsoft AI principles in action: transparency.

“In the space of responsible AI, being as open as possible is the way to go, and there are multiple reasons for that,” says Sameki. “The main reason is building trust with the users and with the consumers of these tools. In my opinion, no one would trust a machine learning evaluation technique or an unfairness mitigation algorithm that is unclear and closed source. Also, this field is very new. Innovating in the open nurtures better collaborations in the field.”

Mehrnoosh Sameki, Microsoft Principal PM Manager

Looking ahead

AI capabilities are only advancing. The larger research community, practitioners, the tech industry, government, and other institutions are working in different ways to steer these advancements in a direction in which AI contributes value and its potential harms are minimized. Practices for responsible AI will need to continue to evolve alongside AI advancements to support these efforts.

For Microsoft researchers like Nushi and product managers like Sameki, that means fostering cross-company, multidisciplinary collaborations in their continued development of tools that encourage targeted model improvement, guided by the step-by-step process of identification, diagnosis, mitigation, and comparison and validation, wherever those advances lead.

“As we get better at this, I hope we move toward a more systematic process to understand what data is actually useful, even for the large models; what is harmful and really shouldn’t be included in those; and what is the data that has a lot of ethical issues if you include it,” says Nushi. “Building AI responsibly is crosscutting, requiring perspectives and contributions from internal teams and external practitioners. Our growing collection of tools shows that effective collaboration has the potential to impact, for the better, how we create the new generation of AI systems.”