An important aspect of human vision is our ability to comprehend 3D shape from the 2D images we observe. Achieving this kind of understanding with computer vision systems has been a fundamental challenge in the field. Many successful approaches rely on multi-view data, where two or more images of the same scene are available from different perspectives, which makes it much easier to infer the 3D shape of objects in the images.

There are, however, many situations where it would be useful to know 3D structure from a single image, but this problem is generally difficult or impossible to solve. For example, it isn’t necessarily possible to tell the difference between an image of an actual beach and an image of a flat poster of the same beach. However, it is possible to estimate 3D structure based on what kind of 3D objects occur commonly and what similar structures look like from different perspectives.

In “LOLNeRF: Learn from One Look”, presented at CVPR 2022, we propose a framework that learns to model 3D structure and appearance from collections of single-view images. LOLNeRF learns the typical 3D structure of a class of objects, such as cars, human faces or cats, but only from single views of any one object, never the same object twice. We build our approach by combining Generative Latent Optimization (GLO) and neural radiance fields (NeRF) to achieve state-of-the-art results for novel view synthesis and competitive results for depth estimation.

Combining GLO and NeRF

GLO is a general method that learns to reconstruct a dataset (such as a set of 2D images) by co-learning a neural network (decoder) and a table of codes (latents) that is also an input to the decoder. Each of these latent codes re-creates a single element (such as an image) from the dataset. Because the latent codes have fewer dimensions than the data elements themselves, the network is forced to generalize, learning common structure in the data (such as the general shape of dog snouts).
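The co-learning can be made concrete with a minimal sketch of GLO on a toy dataset. It assumes a linear decoder and plain gradient descent with hand-written gradients. Both are illustrative choices and not the setup used in LOLNeRF. The names `train_glo`, `W` and `Z` are hypothetical. Each example owns one row of the latent table `Z`, and the same reconstruction loss updates both that table and the shared decoder.

```python
import random

def train_glo(data, latent_dim, steps=2000, lr=0.05, seed=0):
    """Toy GLO: jointly fit a decoder and a table of per-example latent codes.

    Assumption for illustration: the decoder is a single linear map W
    (d x latent_dim, no bias), so gradients can be written out by hand.
    Returns (W, Z, initial_loss, final_loss).
    """
    rng = random.Random(seed)
    n, d = len(data), len(data[0])
    W = [[rng.gauss(0.0, 0.1) for _ in range(latent_dim)] for _ in range(d)]
    # Latent table: one code per dataset element, optimized like any weight.
    Z = [[rng.gauss(0.0, 0.1) for _ in range(latent_dim)] for _ in range(n)]

    def decode(z):
        return [sum(W[r][k] * z[k] for k in range(latent_dim)) for r in range(d)]

    def loss():
        # Mean over examples of the squared reconstruction error.
        return sum(
            sum((y - x) ** 2 for y, x in zip(decode(z), xs))
            for xs, z in zip(data, Z)
        ) / n

    initial = loss()
    for _ in range(steps):
        grad_W = [[0.0] * latent_dim for _ in range(d)]
        new_Z = []
        for xs, z in zip(data, Z):
            err = [y - x for y, x in zip(decode(z), xs)]
            # Each code only receives gradient from its own example.
            grad_z = [2.0 / n * sum(err[r] * W[r][k] for r in range(d))
                      for k in range(latent_dim)]
            # The shared decoder accumulates gradient from every example.
            for r in range(d):
                for k in range(latent_dim):
                    grad_W[r][k] += 2.0 / n * err[r] * z[k]
            new_Z.append([zk - lr * g for zk, g in zip(z, grad_z)])
        # Simultaneous gradient step on the latent table and the decoder.
        Z = new_Z
        W = [[W[r][k] - lr * grad_W[r][k] for k in range(latent_dim)]
             for r in range(d)]
    return W, Z, initial, loss()
```

Consider data that lies on a line in 3D, such as `[[t, 2 * t, -t] for t in (-1.0, -0.5, 0.5, 1.0)]`. There, a one-dimensional latent is enough, and the reconstruction loss falls close to zero. The decoder has to learn the shared direction because each code is a single number.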

NeRF is a technique that is very good at reconstructing a static 3D object from 2D images. It represents an object with a neural network that outputs color and density for each point in 3D space. Color and density values are accumulated along rays, one ray for each pixel in a 2D image. These are then combined using standard computer graphics volume rendering to compute a final pixel color. Importantly, all these operations are differentiable, allowing for end-to-end supervision. By enforcing that each rendered pixel (of the 3D representation) matches the color of the ground truth (2D) pixels, the neural network creates a 3D representation that can be rendered from any viewpoint.
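The volume rendering step is the standard NeRF quadrature. The pixel color is the sum over samples of `T_i * alpha_i * c_i`, where `alpha_i = 1 - exp(-sigma_i * delta_i)` is the opacity of sample i. `T_i` is the product of `(1 - alpha_j)` over all earlier samples j, which is the chance the ray reaches sample i unblocked. The sketch below computes that sum for a single ray in plain Python. The function name and argument layout are illustrative assumptions. A real implementation would batch this over many rays in a differentiable framework.

```python
import math

def composite_ray(densities, colors, deltas):
    """NeRF-style volume rendering quadrature for one ray (illustrative sketch).

    densities: non-negative sigma_i at each sample, ordered near to far.
    colors: RGB tuple c_i at each sample.
    deltas: distance from each sample to the next one along the ray.
    Returns the rendered RGB color and the per-sample weights w_i.
    """
    transmittance = 1.0  # T_i: probability the ray reaches sample i unoccluded
    rgb = [0.0, 0.0, 0.0]
    weights = []
    for sigma, c, delta in zip(densities, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this ray segment
        w = transmittance * alpha               # contribution of this sample
        weights.append(w)
        for ch in range(3):
            rgb[ch] += w * c[ch]
        transmittance *= 1.0 - alpha
    return tuple(rgb), weights
```

Every step is a smooth function of the densities and colors, except at exactly zero density. That is what lets a pixel-color loss be backpropagated into the network.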

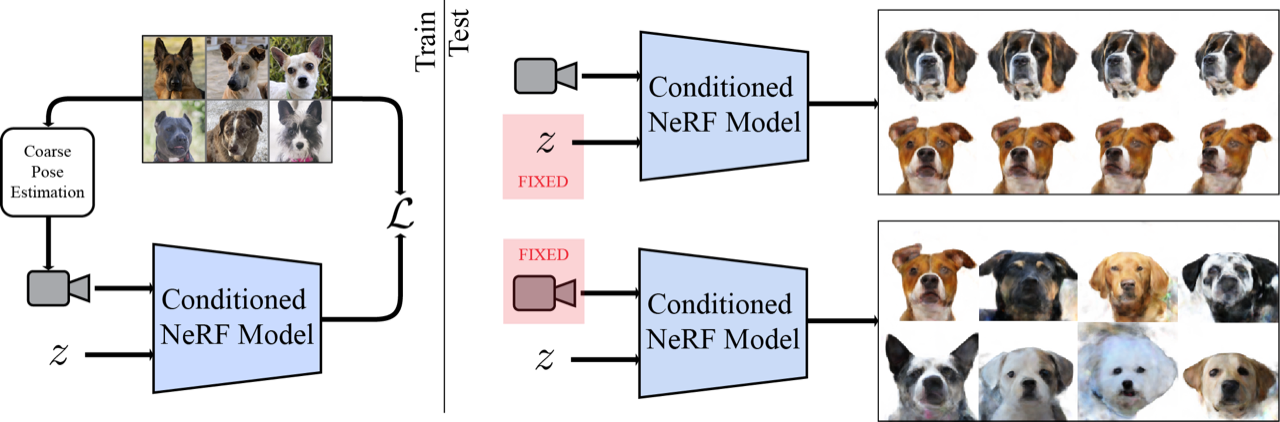

We combine NeRF with GLO by assigning each object a latent code and concatenating it with standard NeRF inputs, giving it the ability to reconstruct multiple objects. Following GLO, we co-optimize these latent codes along with the network weights during training to reconstruct the input images. Unlike standard NeRF, which requires multiple views of the same object, we supervise our method with only single views of any one object (but multiple examples of that type of object). Because NeRF is inherently 3D, we can then render the object from arbitrary viewpoints. Combining NeRF with GLO gives it the ability to learn common 3D structure across instances from only single views while still retaining the ability to recreate specific instances of the dataset.
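The conditioning step can be sketched as building one input vector per 3D sample. The sketch assumes NeRF's usual sinusoidal positional encoding of the point, with the object's latent code appended after it. The ordering, the number of frequencies, the omission of view direction, and the helper names are all hypothetical choices. Because the latent table is just another set of parameters, training updates it together with the network weights, as in GLO.

```python
import math

def positional_encoding(p, num_freqs):
    """NeRF-style sinusoidal encoding: the raw coordinates, then sin and cos
    of each coordinate at frequencies pi * 2^0 .. pi * 2^(num_freqs - 1)."""
    out = list(p)
    for k in range(num_freqs):
        f = (2.0 ** k) * math.pi
        for x in p:
            out.append(math.sin(f * x))
            out.append(math.cos(f * x))
    return out

def conditioned_input(point, latent_code, num_freqs=6):
    """Hypothetical helper (assumed layout): encoded 3D point followed by the
    object's latent code, so one shared network can represent many objects."""
    return positional_encoding(point, num_freqs) + list(latent_code)
```

With a 64-dimensional code, each sample's input has 3 + 3 * 2 * 6 + 64 = 103 entries. Every sample along every ray of a given image shares the same code.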

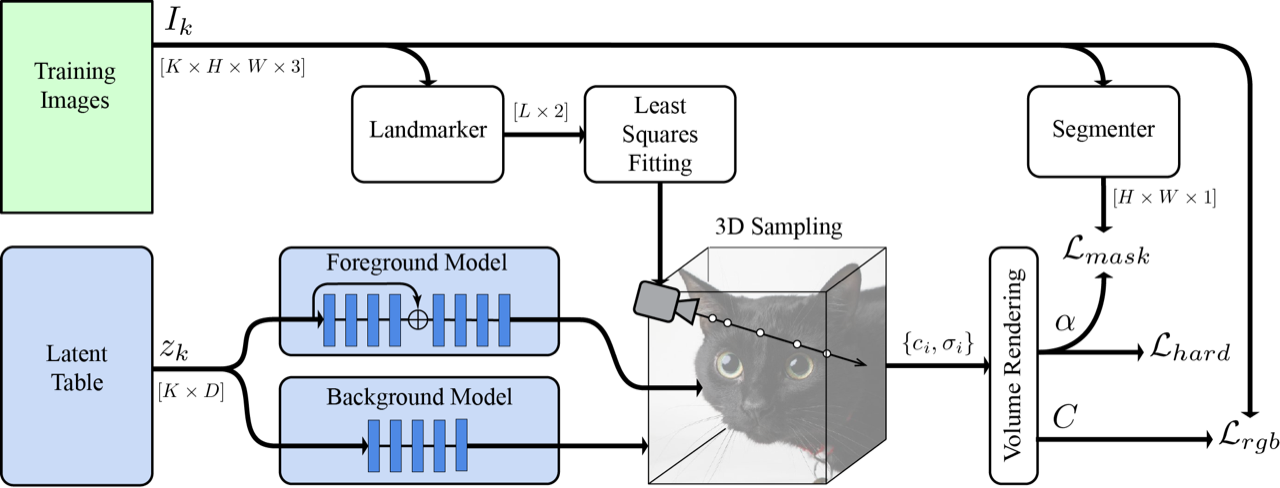

Camera Estimation

In order for NeRF to work, it needs to know the exact camera location, relative to the object, for each image. Unless this was measured when the image was taken, it is generally unknown. Instead, we use the MediaPipe Face Mesh to extract five landmark locations from the images. Each of these 2D predictions corresponds to a semantically consistent point on the object (e.g., the tip of the nose or the corners of the eyes). We can then derive a set of canonical 3D locations for the semantic points, along with estimates of the camera poses for each image, such that the projection of the canonical points into the images is as consistent as possible with the 2D landmarks.
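The quantity being made "as consistent as possible" can be sketched as a reprojection error. The canonical 3D landmarks are projected through a candidate camera and compared against the detected 2D landmarks. The sketch assumes a pinhole camera with a known focal length. For brevity, the pose only rotates about the vertical axis. The five canonical points and all function names are hypothetical. A full fit would minimize this error with an optimizer, over every image's pose and the shared canonical points.

```python
import math

def rotation_y(theta):
    """Rotation about the vertical axis (a simplified, one-parameter pose)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def project(point, R, t, focal):
    """Pinhole projection of a canonical 3D point into one image."""
    cam = [sum(R[r][k] * point[k] for k in range(3)) + t[r] for r in range(3)]
    return (focal * cam[0] / cam[2], focal * cam[1] / cam[2])

def reprojection_error(canonical_pts, landmarks_2d, R, t, focal):
    """Mean squared 2D distance between projected canonical points and the
    detected landmarks; a pose fit would minimize this over R and t."""
    total = 0.0
    for P, (u, v) in zip(canonical_pts, landmarks_2d):
        pu, pv = project(P, R, t, focal)
        total += (pu - u) ** 2 + (pv - v) ** 2
    return total / len(canonical_pts)
```

Usage: with hypothetical canonical points for the eyes, nose tip and mouth corners, landmarks generated by one pose give zero error for that pose and a larger error for any other pose.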

Hard Surface and Mask Losses

Standard NeRF is effective for accurately reproducing the images, but in our single-view case, it tends to produce images that look blurry when viewed off-axis. To address this, we introduce a novel hard surface loss, which encourages the density to adopt sharp transitions from exterior to interior regions, reducing blurring. This essentially tells the network to create “solid” surfaces, and not semi-transparent ones like clouds.
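One loss with this behavior is sketched below. It penalizes each per-sample volume rendering weight w by `-log(exp(-|w|) + exp(-|1 - w|))` plus a constant. That penalty is smallest when w is 0 or 1 and largest at 0.5. Treat the exact formula, its application to the rendering weights, and the averaging as assumptions of this sketch, not as a specification of the LOLNeRF loss.

```python
import math

# Assumed constant: shifts the penalty so it is exactly 0 at w = 0 and w = 1.
_C = math.log(1.0 + math.exp(-1.0))

def hard_surface_loss(weights):
    """Mean penalty over per-sample weights w in [0, 1] (assumed formulation).

    Lowest when each weight is 0 or 1 and highest at 0.5, so it discourages
    semi-transparent, cloud-like density along the ray.
    """
    total = 0.0
    for w in weights:
        total += -math.log(math.exp(-abs(w)) + math.exp(-abs(1.0 - w))) + _C
    return total / len(weights)
```

Adding a term like this to the photometric loss pushes the network to commit each sample to either empty space or an opaque surface.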

We also obtained better results by splitting the network into separate foreground and background networks. We supervised this separation with a mask from the MediaPipe Selfie Segmenter and a loss to encourage network specialization. This allows the foreground network to specialize only on the object of interest, and not get “distracted” by the background, increasing its quality.
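The foreground/background split can be sketched as alpha compositing plus a mask term. The sketch assumes the foreground network's accumulated opacity along a ray is compared to the segmentation mask using binary cross-entropy. That opacity is the sum of the network's volume rendering weights. The cross-entropy choice and the helper names are illustrative assumptions. The specialization loss is not shown.

```python
import math

def composite_fg_bg(fg_rgb, fg_opacity, bg_rgb):
    """Alpha-composite a foreground render over a background render.
    fg_rgb is assumed premultiplied by opacity, as volume rendering returns it."""
    return tuple(f + (1.0 - fg_opacity) * b for f, b in zip(fg_rgb, bg_rgb))

def mask_loss(fg_opacity, mask, eps=1e-6):
    """Assumed form: binary cross-entropy between the foreground's accumulated
    opacity and the segmentation mask value (in [0, 1]) for the same pixel."""
    a = min(max(fg_opacity, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(mask * math.log(a) + (1.0 - mask) * math.log(1.0 - a))
```

A pixel the segmenter marks as background is cheapest when the foreground network leaves it transparent. The background network is then the only one that can explain that pixel's color.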

Results

Surprisingly, we found that fitting only five key points gave camera estimates accurate enough to train a model for cats, dogs, or human faces. This means that given only a single view of your beloved cats Schnitzel, Widget and friends, you can create a new image from any other angle.

Top: example cat images from AFHQ. Bottom: a synthesis of novel 3D views created by LOLNeRF.

Conclusion

We’ve developed a technique that is effective at discovering 3D structure from single 2D images. We see great potential in LOLNeRF for a variety of applications and are currently investigating potential use cases.

Interpolation of feline identities from linear interpolation of learned latent codes for different examples in AFHQ.
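Identity interpolation only requires blending two learned latent codes before decoding. Below is a minimal sketch using a hypothetical helper name. Rendering each intermediate code through the trained network is not shown.

```python
def lerp_codes(z_a, z_b, num_steps):
    """Linearly interpolate between two latent codes (hypothetical helper).

    Returns num_steps codes from z_a to z_b inclusive; decoding each one
    with the trained network would render a blended identity.
    """
    if num_steps < 2:
        raise ValueError("num_steps must be at least 2")
    path = []
    for i in range(num_steps):
        s = i / (num_steps - 1)  # interpolation fraction in [0, 1]
        path.append([(1.0 - s) * a + s * b for a, b in zip(z_a, z_b)])
    return path
```

Interpolation is possible because the network was trained to decode every code in a shared space. Points between two codes can still produce plausible objects, although nothing guarantees it.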

Code Release

We acknowledge the potential for misuse and the importance of acting responsibly. To that end, we will only release the code for reproducibility purposes, and will not release any trained generative models.

Acknowledgements

We would like to thank Andrea Tagliasacchi, Kwang Moo Yi, Viral Carpenter, David Fleet, Danica Matthews, Florian Schroff, Hartwig Adam and Dmitry Lagun for continuous help in building this technology.