Robot learning has been applied to a wide range of challenging real-world tasks, including dexterous manipulation, legged locomotion, and grasping. It is less common to see robot learning applied to dynamic, high-acceleration tasks that require tight-loop human-robot interaction, such as table tennis. Two complementary properties of table tennis make it interesting for robot learning research. First, the task requires both speed and precision, which puts significant demands on a learning algorithm. At the same time, the problem is highly structured (with a fixed, predictable environment) and naturally multi-agent (the robot can play with humans or with another robot), making it a desirable testbed for investigating questions about human-robot interaction and reinforcement learning. These properties have led several research groups to develop table tennis research platforms [1, 2, 3, 4].

The Robotics team at Google has built such a platform to study problems that arise from robot learning in a multi-player, dynamic, and interactive setting. In the rest of this post we introduce two projects, Iterative-Sim2Real (to be presented at CoRL 2022) and GoalsEye (IROS 2022), which illustrate the problems we have been investigating so far. Iterative-Sim2Real enables a robot to hold rallies of over 300 hits with a human player, while GoalsEye enables learning goal-conditioned policies that match the precision of amateur humans.

| Iterative-Sim2Real policies playing cooperatively with humans (top) and a GoalsEye policy returning balls to different locations (bottom). |

Iterative-Sim2Real: Leveraging a Simulator to Play Cooperatively with Humans

In this project, the robot’s goal is cooperative in nature: to sustain a rally with a human for as long as possible. Since it would be tedious and time-consuming to train directly against a human player in the real world, we adopt a simulation-based (i.e., sim-to-real) approach. However, because human behavior is hard to simulate accurately, applying sim-to-real learning to tasks that require tight, closed-loop interaction with a human player is difficult.

In Iterative-Sim2Real (i.e., i-S2R), we present a method for learning human behavior models for human-robot interaction tasks, and instantiate it on our robotic table tennis platform. We have built a system that can achieve rallies of up to 340 hits with an amateur human player (shown below).

| A 340-hit rally lasting over 4 minutes. |

Learning Human Behavior Models: a Chicken and Egg Problem

The central problem in learning accurate human behavior models for robotics is the following: if we do not have a good-enough robot policy to begin with, then we cannot collect high-quality data on how a person might interact with the robot. But without a human behavior model, we cannot obtain robot policies in the first place. An alternative would be to train a robot policy directly in the real world, but this is often slow, cost-prohibitive, and poses safety-related challenges, which are further exacerbated when people are involved. i-S2R, visualized below, is a solution to this chicken and egg problem. It uses a simple model of human behavior as an approximate starting point and alternates between training in simulation and deploying in the real world. In each iteration, both the human behavior model and the policy are refined.
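The alternation between simulated training and real-world deployment can be sketched as a toy loop. This is a minimal illustration under loud assumptions, not the i-S2R implementation: the human behavior model here is a hypothetical Gaussian over a single scalar "ball launch" parameter, the "policy" is just the value the robot prepares for, and `deploy_in_real` is a simulated stand-in for a human player in which a better-matched policy sustains longer rallies and therefore yields more observations. All names and numbers are illustrative.

```python
import random
import statistics
from dataclasses import dataclass


@dataclass
class HumanModel:
    # Hypothetical human behavior model: a Gaussian over one scalar
    # ball-launch parameter (e.g. incoming ball speed).
    mean: float
    std: float


def train_in_sim(model: HumanModel, rng: random.Random, n: int = 500) -> float:
    # Toy "policy": the launch value the robot prepares for, fit to balls
    # sampled from the current (approximate) human model.
    return statistics.fmean(rng.gauss(model.mean, model.std) for _ in range(n))


def deploy_in_real(policy: float, rng: random.Random,
                   true_mean: float = 6.0, true_std: float = 0.5) -> list[float]:
    # Stand-in for a real human player (hypothetical): the closer the policy
    # is to the human's true behavior, the longer the rally, so the more
    # real observations one deployment produces.
    hits = max(2, int(50 / (1.0 + abs(policy - true_mean))))
    return [rng.gauss(true_mean, true_std) for _ in range(hits)]


def iterative_sim2real(init: HumanModel, iterations: int, seed: int = 0):
    rng = random.Random(seed)
    model, data = init, []
    policy = train_in_sim(model, rng)
    for _ in range(iterations):
        policy = train_in_sim(model, rng)          # 1. train in simulation
        data.extend(deploy_in_real(policy, rng))   # 2. deploy, collect real data
        model = HumanModel(statistics.fmean(data),  # 3. refine the human model
                           statistics.stdev(data))
    return model, policy


# Start from a deliberately rough human model and iterate.
model, policy = iterative_sim2real(HumanModel(mean=3.0, std=2.0), iterations=5)
print(f"human model mean={model.mean:.2f} std={model.std:.2f}, policy={policy:.2f}")
```

The design point is the data flow: each deployment adds real observations to a growing dataset, and the refined model is what the next round of simulated training uses, so a rough initial model only needs to be good enough to get the first rallies going.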

| i-S2R Methodology. |

Results

To evaluate i-S2R, we repeated the training process five times with five different human opponents and compared it with a baseline approach of ordinary sim-to-real plus fine-tuning (S2R+FT). When aggregated across all players, the i-S2R rally length is about 9% higher than that of S2R+FT (below on the left). The histogram of rally lengths for i-S2R and S2R+FT (below on the right) shows that a large fraction of the S2R+FT rallies are short (i.e., fewer than 5 hits), while i-S2R achieves longer rallies more frequently.

| Summary of i-S2R results. Boxplot details: the white circle is the mean, the horizontal line is the median, and the box bounds are the 25th and 75th percentiles. |

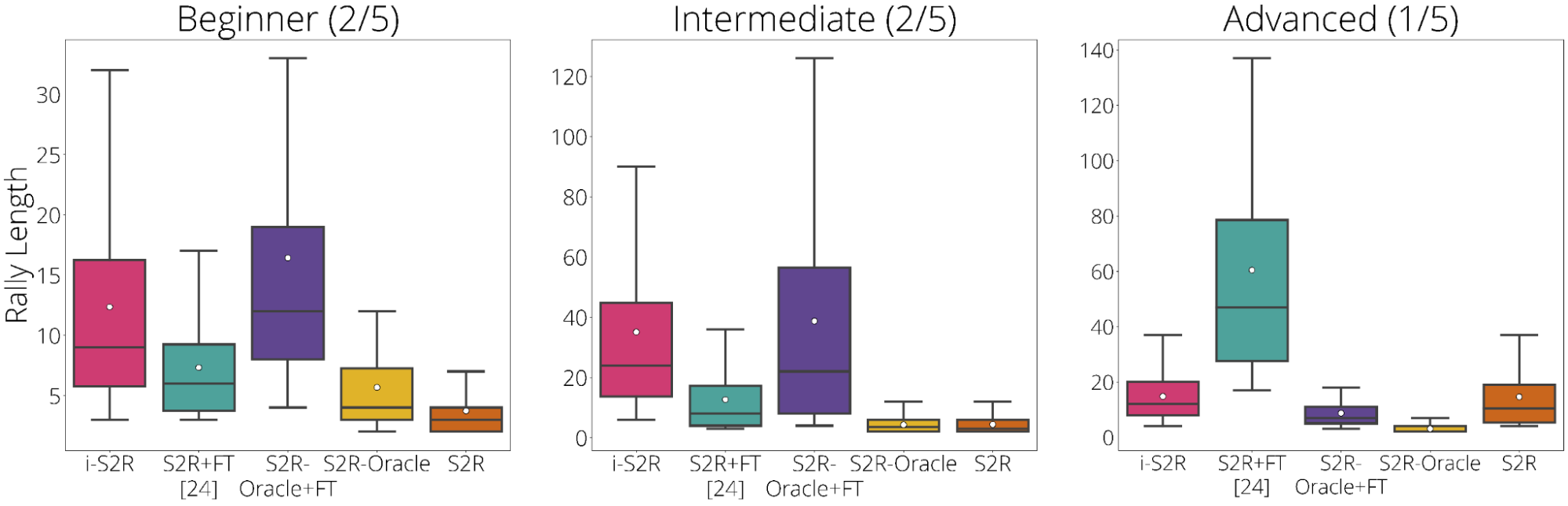
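The summary statistics shown in the figure can be computed along these lines. This is a hedged sketch: the function names are hypothetical, the percentile interpolation method (`method="inclusive"`) is an assumption since the post does not say how quartiles were computed, and the toy rally lengths in the usage note are made up for illustration rather than taken from the experiments.

```python
import statistics


def rally_summary(lengths: list[int]) -> dict[str, float]:
    """Boxplot statistics as described in the caption: mean, median, quartiles."""
    # Three cut points split the data into quarters; the middle one is the median.
    q1, median, q3 = statistics.quantiles(lengths, n=4, method="inclusive")
    return {
        "mean": statistics.fmean(lengths),
        "median": median,
        "q1": q1,  # 25th percentile (lower box bound)
        "q3": q3,  # 75th percentile (upper box bound)
        # Fraction of "short" rallies, i.e. fewer than 5 hits.
        "frac_short": sum(1 for n in lengths if n < 5) / len(lengths),
    }


def relative_improvement(method: list[int], baseline: list[int]) -> float:
    """Relative gain in mean rally length; 0.09 means about 9% higher."""
    return statistics.fmean(method) / statistics.fmean(baseline) - 1.0
```

For example, `rally_summary([1, 2, 3, 4, 5])` gives a mean and median of 3.0, quartiles of 2.0 and 4.0, and a short-rally fraction of 0.8, while a `relative_improvement` of about 0.09 would correspond to the roughly 9% gain reported above.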

We also break down the results by player type: beginner (40% of players), intermediate (40% of players), and advanced (20% of players). We see that i-S2R significantly outperforms S2R+FT for both beginner and intermediate players (80% of players).

| i-S2R results by player type. |

More details on i-S2R can be found in our preprint, on our website, and in the following summary video.

GoalsEye: Learning to Return Balls Precisely on a Physical Robot

While we focused on sim-to-real learning in i-S2R, it is sometimes desirable to learn using only real-world data; in that case, there is no sim-to-real gap to close. Imitation learning (IL) provides a simple and stable approach to learning in the real world, but it requires access to demonstrations and cannot exceed the performance of the teacher. Collecting expert human demonstrations of precise goal-targeting in high-speed settings is challenging and sometimes impossible (due to the limited precision of human movements). While reinforcement learning (RL) is well suited to such high-speed, high-precision tasks, it faces a difficult exploration problem (especially at the start) and can be very sample inefficient. In GoalsEye, we demonstrate an approach that combines recent behavior cloning techniques [5, 6] to learn a precise goal-targeting policy, starting from a small, weakly structured, non-targeting dataset.

Here we consider a different table tennis task with an emphasis on precision. We want the robot to return the ball to an arbitrary goal location on the table, e.g., “hit the back left corner” or “land the ball just over the net on the right side” (see left video below). Further, we wanted to find a method that can be applied directly in our real-world table tennis environment, with no simulation involved. We found that the synthesis of two existing imitation learning techniques, Learning from Play (LFP) and Goal-Conditioned Supervised Learning (GCSL), scales to this setting. It is safe and sample efficient enough to train a policy on a physical robot that is as accurate as amateur humans at returning balls to specific goals on the table.

| GoalsEye policy aiming at a 20 cm diameter goal (left). Human player aiming at the same goal (right). |

The key ingredients for success are:

- A minimal, non-goal-directed “bootstrap” dataset of the robot hitting the ball, to overcome the initial difficult exploration problem.

- Hindsight-relabeled goal-conditioned behavioral cloning (GCBC) to train a goal-directed policy that can reach any goal in the dataset.

- Iterative self-supervised goal reaching. The agent improves continuously by setting random goals and attempting to reach them with the current policy. All attempts are relabeled and added to a continuously expanding training set. This self-practice, in which the robot expands the training data by setting and attempting to reach goals, is repeated iteratively.
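The three ingredients can be illustrated with a toy loop. This is a sketch under stated assumptions, not the GoalsEye code: `hit` is a made-up 2D stand-in for the robot and table (actions map to landing points in meters, with noise), a nearest-neighbor lookup is swapped in for the neural goal-conditioned policy as a simple nonparametric form of behavioral cloning, and the dataset sizes, exploration noise, and 10 cm success radius are arbitrary choices for illustration.

```python
import math
import random

Action = tuple[float, float]
Point = tuple[float, float]


def hit(action: Action, rng: random.Random) -> Point:
    """Hypothetical robot + table: maps an action to a noisy landing point (m)."""
    x = 0.7 * math.tanh(2.0 * action[0]) + rng.gauss(0.0, 0.03)
    y = 0.7 + 0.6 * math.tanh(2.0 * action[1]) + rng.gauss(0.0, 0.03)
    return (x, y)


def policy(dataset: list[tuple[Point, Action]], goal: Point) -> Action:
    """Nearest-neighbor GCBC stand-in: copy the action whose relabeled goal is closest."""
    _, action = min(dataset, key=lambda ex: math.dist(ex[0], goal))
    return action


def sample_goal(rng: random.Random) -> Point:
    # Random target anywhere on the (toy) opponent's half of the table.
    return (rng.uniform(-0.6, 0.6), rng.uniform(0.2, 1.2))


def accuracy(dataset, rng, n_goals: int = 200, radius: float = 0.1) -> float:
    """Fraction of random goals the noise-free policy lands within `radius` of."""
    hits = 0
    for _ in range(n_goals):
        goal = sample_goal(rng)
        hits += math.dist(hit(policy(dataset, goal), rng), goal) <= radius
    return hits / n_goals


rng = random.Random(0)

# 1. Bootstrap: a small, non-goal-directed dataset covering only a narrow region.
#    Each episode is relabeled in hindsight with where the ball actually landed.
dataset = []
for _ in range(50):
    a = (rng.uniform(-0.15, 0.15), rng.uniform(-0.15, 0.15))
    dataset.append((hit(a, rng), a))
before = accuracy(dataset, rng)

# 2. Self-practice: set a random goal, attempt it with the current policy plus a
#    little exploration noise, relabel with the achieved landing, and append.
for _ in range(1500):
    goal = sample_goal(rng)
    base = policy(dataset, goal)
    a = (base[0] + rng.gauss(0.0, 0.1), base[1] + rng.gauss(0.0, 0.1))
    dataset.append((hit(a, rng), a))  # hindsight relabel: goal := achieved landing
after = accuracy(dataset, rng)

print(f"accuracy before: {before:.2f}, after self-practice: {after:.2f}")
```

Hindsight relabeling is what makes every attempt useful here: even a miss becomes a correct example for the goal it actually reached, so the training set grows toward regions the bootstrap data never covered. Without any bootstrap data, `policy` would have nothing to copy, which loosely mirrors the need for an initial dataset.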

| GoalsEye methodology. |

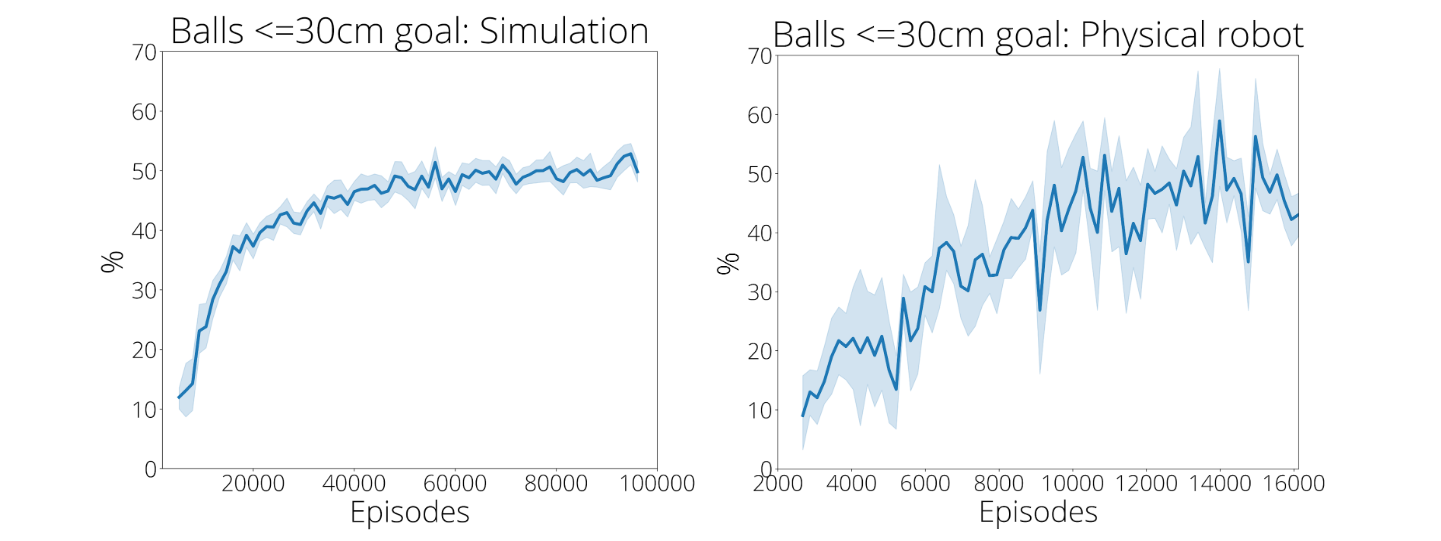

Demonstrations and Self-Improvement Through Practice Are Key

The synthesis of techniques is crucial. The policy’s objective is to return a variety of incoming balls to any location on the opponent’s side of the table. A policy trained on the initial 2,480 demonstrations reaches within 30 cm of the goal only 9% of the time. However, after the policy has self-practiced for ~13,500 attempts, goal-reaching accuracy rises to 43% (below on the right). This improvement is clearly visible in the videos below. Yet if a policy only self-practices, training fails completely in this setting. Interestingly, the number of demonstrations improves the efficiency of subsequent self-practice, albeit with diminishing returns. This suggests that demonstration data and self-practice could be traded off against each other, depending on the relative time and cost of gathering demonstration data compared with self-practice.

| Self-practice substantially improves accuracy. Left: simulated training. Right: real robot training. The demonstration datasets contain ~2,500 episodes, both in simulation and in the real world. |

| Visualizing the benefits of self-practice. Left: policy trained on the initial 2,480 demonstrations. Right: policy after an additional 13,500 self-practice attempts. |

More details on GoalsEye can be found in the preprint and on our website.

Conclusion and Future Work

We have presented two complementary projects using our robotic table tennis research platform. i-S2R learns RL policies that are able to interact with humans, while GoalsEye demonstrates that learning from unstructured real-world data combined with self-supervised practice is effective for learning goal-conditioned policies in a precise, dynamic setting.

One interesting research direction to pursue on the table tennis platform would be to build a robot “coach” that could adapt its play style to the skill level of the human player to keep things challenging and exciting.

Acknowledgements

We thank our co-authors, Saminda Abeyruwan, Alex Bewley, Krzysztof Choromanski, David B. D’Ambrosio, Tianli Ding, Deepali Jain, Corey Lynch, Pannag R. Sanketi, Pierre Sermanet and Anish Shankar. We are also grateful for the support of many members of the Robotics Team, who are listed in the acknowledgement sections of the papers.