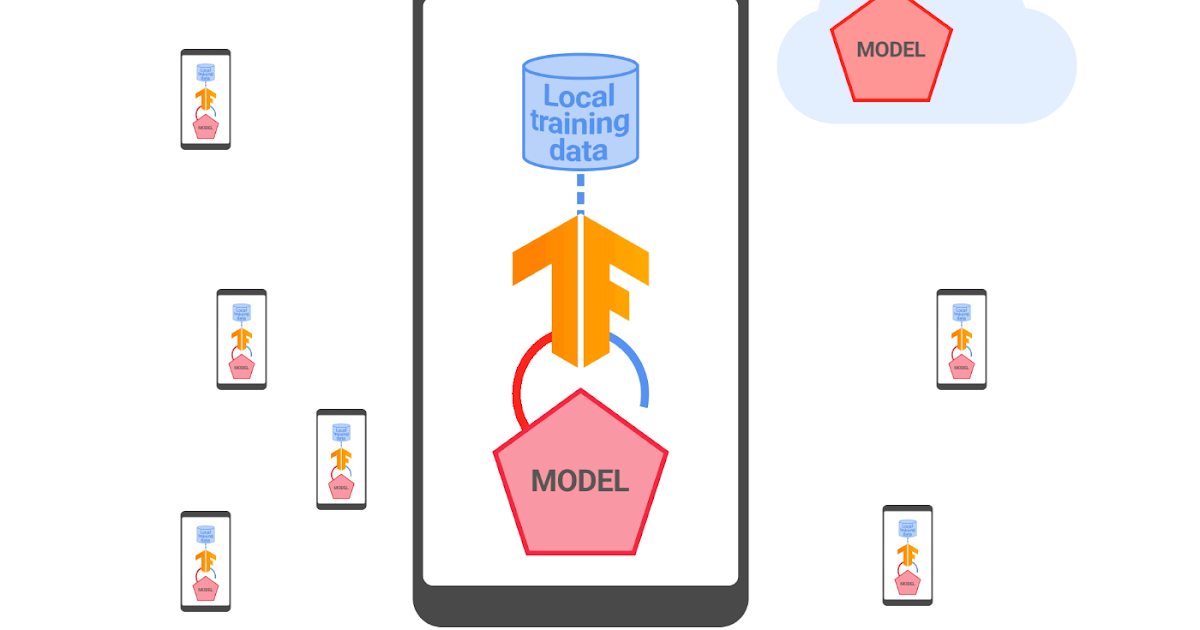

Federated learning is a distributed way of training machine learning (ML) models where data is processed locally and only focused model updates and metrics that are intended for immediate aggregation are shared with a server that orchestrates training. This allows models to be trained on locally available signals without exposing raw data to servers, increasing user privacy. In 2021, we announced that we are using federated learning to train Smart Text Selection models, an Android feature that helps users select and copy text easily by predicting what text they want to select and then automatically expanding the selection for them.

Since that launch, we have worked to improve the privacy guarantees of this technology by carefully combining secure aggregation (SecAgg) and a distributed version of differential privacy. In this post, we describe how we built and deployed the first federated learning system that provides formal privacy guarantees to all user data before it becomes visible to an honest-but-curious server, meaning a server that follows the protocol but could try to gain insights about users from the data it receives. The Smart Text Selection models trained with this system have reduced memorization by more than two-fold, as measured by standard empirical testing methods.

Scaling secure aggregation

Data minimization is an important privacy principle behind federated learning. It refers to focused data collection, early aggregation, and minimal data retention required during training. While every device participating in a federated learning round computes a model update, the orchestrating server is only interested in their average. Therefore, in a world that optimizes for data minimization, the server would learn nothing about individual updates and only receive an aggregate model update. This is precisely what the SecAgg protocol achieves, under rigorous cryptographic guarantees.
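The idea that a server can learn a sum without learning any individual term can be illustrated with pairwise additive masks that cancel in the aggregate. The following is a toy sketch only, not the deployed protocol: it leaves out key agreement, dropout recovery, and every cryptographic protection, and the modulus, seed, and function names are assumptions made for illustration.

```python
import random

MODULUS = 2**16  # illustrative group size, not a value taken from the post


def mask_updates(updates, modulus=MODULUS, seed=0):
    """Return masked copies of integer update vectors.

    Each pair of clients (i, j) with i < j shares a random mask vector;
    client i adds it and client j subtracts it, so all masks cancel in the sum.
    """
    rng = random.Random(seed)  # stands in for pairwise shared secrets
    n, dim = len(updates), len(updates[0])
    masked = [[x % modulus for x in u] for u in updates]
    for i in range(n):
        for j in range(i + 1, n):
            mask = [rng.randrange(modulus) for _ in range(dim)]
            for k in range(dim):
                masked[i][k] = (masked[i][k] + mask[k]) % modulus
                masked[j][k] = (masked[j][k] - mask[k]) % modulus
    return masked


def aggregate(masked, modulus=MODULUS):
    """Server-side modular sum of the masked vectors."""
    dim = len(masked[0])
    return [sum(v[k] for v in masked) % modulus for k in range(dim)]


updates = [[1, 2, 3], [10, 20, 30], [100, 200, 300]]
masked = mask_updates(updates)
total = aggregate(masked)  # equals the element-wise sum of the raw updates
```

In this naive form every client handles one mask per other client, so its work grows linearly with the number of participants.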

Important to this work, two recent advancements have improved the efficiency and scalability of SecAgg at Google:

- An improved cryptographic protocol: Until recently, a significant bottleneck in SecAgg was client computation, as the work required on each device scaled linearly with the total number of clients (N) participating in the round. In the new protocol, client computation scales logarithmically in N. This, along with similar gains in server costs, results in a protocol able to handle larger rounds. Having more users participate in each round improves privacy, both empirically and formally.

- Optimized client orchestration: SecAgg is an interactive protocol, where participating devices progress together. An important feature of the protocol is that it is robust to some devices dropping out. If a client does not send a response within a predefined time window, the protocol can continue without that client’s contribution. We have deployed statistical methods to auto-tune this time window adaptively, resulting in improved protocol throughput.

The above improvements made it easier and faster to train Smart Text Selection with stronger data minimization guarantees.

Aggregating everything via secure aggregation

A typical federated training system involves aggregating not only model updates but also metrics that describe the performance of the local training. These are important for understanding model behavior and debugging potential training issues. In federated training for Smart Text Selection, all model updates and metrics are aggregated via SecAgg. This behavior is statically asserted using TensorFlow Federated, and locally enforced in Android’s Private Compute Core secure environment. As a result, privacy is enhanced even further for users training Smart Text Selection, because unaggregated model updates and metrics are not visible to any part of the server infrastructure.

Differential privacy

SecAgg helps minimize data exposure, but it does not necessarily produce aggregates that guarantee against revealing anything unique to an individual. This is where differential privacy (DP) comes in. DP is a mathematical framework that sets a limit on an individual’s influence on the outcome of a computation, such as the parameters of an ML model. This is accomplished by bounding the contribution of any individual user and adding noise during the training process to produce a probability distribution over output models. DP comes with a parameter (ε) that quantifies how much the distribution could change when adding or removing the training examples of any individual user (the smaller the better).
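For reference, the role of ε can be written out using the standard textbook definition of ε-DP. The symbols $M$ (the randomized training mechanism), $D$ and $D'$ (datasets that differ in the data of one user), and $S$ (any set of possible output models) are notation introduced here for illustration and do not appear in the post itself:

$$
\Pr[M(D) \in S] \;\le\; e^{\varepsilon} \, \Pr[M(D') \in S] \quad \text{for all neighboring } D, D' \text{ and all } S .
$$

When ε is close to 0, the two output distributions are nearly indistinguishable, which is why smaller values mean stronger privacy. Practical analyses often use a relaxed variant that adds a small slack term δ to the right-hand side.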

Recently, we announced a new method of federated training that enforces formal and meaningfully strong DP guarantees in a centralized manner, where a trusted server controls the training process. This protects against external attackers who may attempt to analyze the model. However, this approach still relies on trust in the central server. To provide even greater privacy protections, we have created a system that uses distributed differential privacy (DDP) to enforce DP in a distributed manner, integrated within the SecAgg protocol.

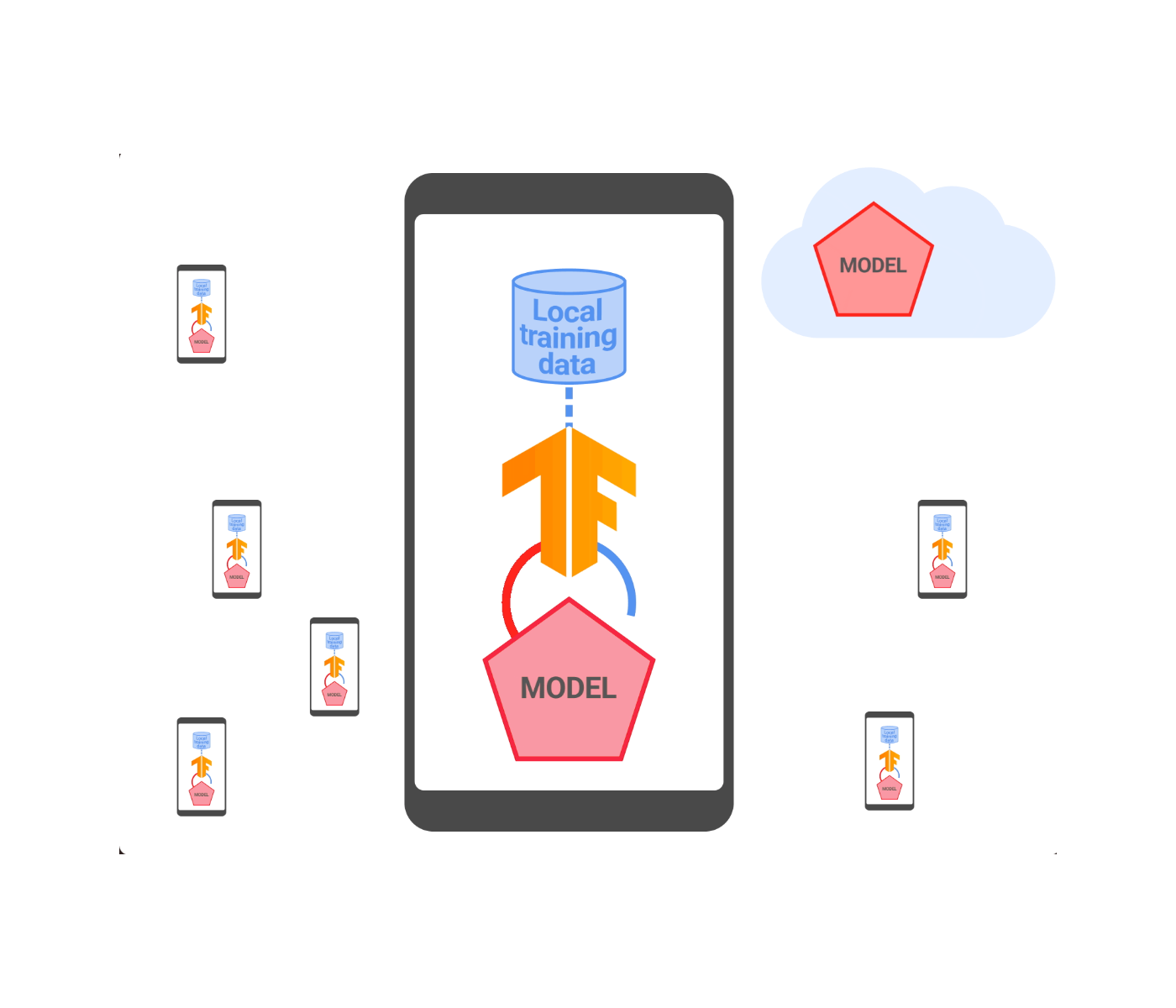

Distributed differential privacy

DDP is a technology that offers DP guarantees with respect to an honest-but-curious server coordinating training. It works by having each participating device clip and noise its update locally, and then aggregating these noisy clipped updates through the new SecAgg protocol described above. As a result, the server only sees the noisy sum of the clipped updates.
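The clip-then-noise step can be sketched as follows. This is a simplified illustration under stated assumptions, not the production code: it uses continuous Gaussian noise for readability, whereas the system described in this post works with integer noise, and a plain sum stands in for SecAgg. The function names and the rule for splitting the noise across devices are hypothetical.

```python
import math
import random


def clip_l2(update, clip_norm):
    """Scale the update down so its L2 norm is at most clip_norm."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def local_noisy_update(update, clip_norm, local_stddev, rng):
    """Clip, then add this device's share of Gaussian noise."""
    clipped = clip_l2(update, clip_norm)
    return [x + rng.gauss(0.0, local_stddev) for x in clipped]


def noisy_sum(updates, clip_norm, total_stddev, seed=0):
    """Sum of locally noised updates: the only quantity the server would see."""
    rng = random.Random(seed)
    n = len(updates)
    # Independent Gaussians add in variance, so each device contributes
    # total_stddev / sqrt(n) to reach total_stddev in the sum.
    local_stddev = total_stddev / math.sqrt(n)
    noised = [local_noisy_update(u, clip_norm, local_stddev, rng) for u in updates]
    return [sum(col) for col in zip(*noised)]
```

Because no single device adds the full amount of noise, the guarantee relies on the aggregation hiding the individual noisy updates from the server.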

However, the combination of local noise addition and use of SecAgg presents significant challenges in practice:

- An improved discretization method: One challenge is properly representing model parameters as integers in SecAgg’s finite group with integer modular arithmetic, which can inflate the norm of the discretized model and require more noise for the same privacy level. For example, randomized rounding to the nearest integers could inflate the user’s contribution by a factor equal to the number of model parameters. We addressed this by scaling the model parameters, applying a random rotation, and rounding to the nearest integers. We also developed an approach for auto-tuning the discretization scale during training. This led to an even more efficient and accurate integration between DP and SecAgg.

- Optimized discrete noise addition: Another challenge is devising a scheme for choosing an arbitrary number of bits per model parameter without sacrificing end-to-end privacy guarantees, which depend on how the model updates are clipped and noised. To address this, we added integer noise in the discretized domain and analyzed the DP properties of sums of integer noise vectors using the distributed discrete Gaussian and distributed Skellam mechanisms.
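The scale, rotate, round, and add-integer-noise steps can be sketched end to end. This is a minimal illustration under several assumptions and should not be read as the released implementation: the random rotation is realized as random sign flips followed by a normalized Hadamard transform, the integer noise is Skellam noise sampled as the difference of two Poisson draws, and the parameter names (`scale`, `noise_rate`, `bits`) are hypothetical. No privacy accounting is performed, and the vector length must be a power of two.

```python
import math
import random


def hadamard(vec):
    """Normalized fast Walsh-Hadamard transform (length must be a power of 2)."""
    out, h = list(vec), 1
    while h < len(out):
        for i in range(0, len(out), 2 * h):
            for j in range(i, i + h):
                a, b = out[j], out[j + h]
                out[j], out[j + h] = a + b, a - b
        h *= 2
    norm = math.sqrt(len(out))
    return [x / norm for x in out]


def rotate(vec, signs):
    """Random rotation: random sign flips followed by a Hadamard transform."""
    return hadamard([s * x for s, x in zip(signs, vec)])


def unrotate(vec, signs):
    """Inverse of rotate (the normalized Hadamard transform is its own inverse)."""
    return [s * x for s, x in zip(signs, hadamard(vec))]


def stochastic_round(x, rng):
    """Round to a neighboring integer so that the result is unbiased."""
    low = math.floor(x)
    return low + (1 if rng.random() < x - low else 0)


def poisson(lam, rng):
    """Knuth's Poisson sampler; adequate for the small rates used here."""
    threshold, k, p = math.exp(-lam), 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1


def encode(update, signs, scale, noise_rate, bits, rng):
    """Scale, rotate, round, add Skellam noise, and wrap into the finite group."""
    rotated = rotate([scale * x for x in update], signs)
    modulus = 2 ** bits
    out = []
    for x in rotated:
        skellam = poisson(noise_rate, rng) - poisson(noise_rate, rng)
        out.append((stochastic_round(x, rng) + skellam) % modulus)
    return out


def decode(total, signs, scale, bits):
    """Map the modular sum back to signed values, then undo rotation and scale."""
    modulus = 2 ** bits
    signed = [t - modulus if t >= modulus // 2 else t for t in total]
    return [x / scale for x in unrotate(signed, signs)]
```

All devices would need to share the same `signs` vector so that the server can invert the rotation after summing the encoded vectors modulo `2 ** bits`. The scale and bit width have to be chosen together so that the noisy sum does not wrap around the group.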

An overview of federated learning with distributed differential privacy.

We tested our DDP solution on a variety of benchmark datasets and in production, and validated that we can match the accuracy of central DP with a SecAgg finite group of size 12 bits per model parameter. This meant that we were able to achieve added privacy advantages while also reducing memory and communication bandwidth. To demonstrate this, we applied this technology to train and launch Smart Text Selection models. This was done with an appropriate amount of noise chosen to maintain model quality. All Smart Text Selection models trained with federated learning now come with DDP guarantees that apply to both the model updates and metrics seen by the server during training. We have also open sourced the implementation in TensorFlow Federated.

Empirical privacy testing

While DDP adds formal privacy guarantees to Smart Text Selection, those formal guarantees are relatively weak (a finite but large ε, in the hundreds). However, any finite ε is an improvement over a model with no formal privacy guarantee for two reasons: 1) a finite ε moves the model into a regime where further privacy improvements can be quantified; and 2) even large ε’s can indicate a substantial decrease in the ability to reconstruct training data from the trained model. To get a more concrete understanding of the empirical privacy advantages, we performed thorough analyses by applying the Secret Sharer framework to Smart Text Selection models. Secret Sharer is a model auditing technique that can be used to measure the degree to which models unintentionally memorize their training data.

To perform Secret Sharer analyses for Smart Text Selection, we set up control experiments that collect gradients using SecAgg. The treatment experiments use distributed differential privacy aggregators with different amounts of noise.

We found that even low amounts of noise reduce memorization meaningfully, more than doubling the Secret Sharer rank metric for relevant canaries compared to the baseline. This means that even though the DP ε is large, we empirically verified that these amounts of noise already help reduce memorization for this model. However, to further improve on this and to get stronger formal guarantees, we aim to use even larger noise multipliers in the future.
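To make the rank metric concrete, the sketch below computes a canary's rank among reference sequences and the associated exposure in bits. The exact metric and setup used in these experiments are not spelled out in this post, so this assumes the common rank-based definition in which a canary is compared with random reference sequences by model loss; the function names and the loss values in the example are illustrative.

```python
import math


def canary_rank(canary_loss, reference_losses):
    """1-based rank of the canary among references (lower loss ranks first)."""
    return 1 + sum(1 for loss in reference_losses if loss < canary_loss)


def exposure(canary_loss, reference_losses):
    """Exposure in bits: log2 of the candidate count minus log2 of the rank."""
    total = len(reference_losses) + 1
    return math.log2(total) - math.log2(canary_rank(canary_loss, reference_losses))


# A canary that the model scores better than every reference is ranked first.
memorized = canary_rank(0.1, [0.5, 0.2, 0.9])
# A canary that blends in with the references gets a higher rank.
blended = canary_rank(0.6, [0.5, 0.2, 0.9])
```

Under this definition a higher rank means the canary stands out less, and doubling the rank lowers the exposure by exactly one bit.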

Next steps

We developed and deployed the first federated learning and distributed differential privacy system that comes with formal DP guarantees with respect to an honest-but-curious server. While the system offers substantial additional protections, a fully malicious server might still be able to get around the DDP guarantees either by manipulating the public key exchange of SecAgg or by injecting a sufficient number of “fake” malicious clients that don’t add the prescribed noise into the aggregation pool. We are excited to address these challenges by continuing to strengthen the DP guarantee and its scope.

Acknowledgements

The authors would like to thank Adria Gascon for significant impact on the blog post itself, as well as the people who helped develop these ideas and bring them to practice: Ken Liu, Jakub Konečný, Brendan McMahan, Naman Agarwal, Thomas Steinke, Christopher Choquette, Adria Gascon, James Bell, Zheng Xu, Asela Gunawardana, Kallista Bonawitz, Mariana Raykova, Stanislav Chiknavaryan, Tancrède Lepoint, Shanshan Wu, Yu Xiao, Zachary Charles, Chunxiang Zheng, Daniel Ramage, Galen Andrew, Hugo Song, Chang Li, Sofia Neata, Ananda Theertha Suresh, Timon Van Overveldt, Zachary Garrett, Wennan Zhu, and Lukas Zilka. We would also like to thank Tom Small for creating the animated figure.