Natural language enables flexible descriptive queries about images. The interaction between text queries and images grounds linguistic meaning in the visual world, facilitating a better understanding of object relationships, human intentions towards objects, and interactions with the environment. The research community has studied object-level visual grounding through a range of tasks, including referring expression comprehension, text-based localization, and more broadly object detection, each of which requires different skills in a model. For example, object detection seeks to find all objects from a predefined set of classes, which requires accurate localization and classification, while referring expression comprehension localizes an object from a referring text and often requires complex reasoning on prominent objects. At the intersection of the two is text-based localization, in which a simple category-based text query prompts the model to detect the objects of interest.

Due to their dissimilar task properties, referring expression comprehension, detection, and text-based localization are mostly studied through separate benchmarks, with most models dedicated to only one task. As a result, existing models have not adequately synthesized information from the three tasks to achieve a more holistic visual and linguistic understanding. Referring expression comprehension models, for instance, are trained to predict one object per image, and often struggle to localize multiple objects, reject negative queries, or detect novel categories. In addition, detection models are unable to process text inputs, and text-based localization models often struggle to process complex queries that refer to one object instance, such as “Left half sandwich.” Lastly, none of the models can generalize sufficiently well beyond their training data and categories.

To address these limitations, we are presenting “FindIt: Generalized Localization with Natural Language Queries” at ECCV 2022. Here we propose a unified, general-purpose and multitask visual grounding model, called FindIt, that can flexibly answer different types of grounding and detection queries. Key to this architecture is a multi-level cross-modality fusion module that can perform complex reasoning for referring expression comprehension and simultaneously recognize small and challenging objects for text-based localization and detection. In addition, we discover that a standard object detector and detection losses are sufficient and surprisingly effective for all three tasks without the need for task-specific design and losses common in existing works. FindIt is simple, efficient, and outperforms alternative state-of-the-art models on the referring expression comprehension and text-based localization benchmarks, while being competitive on the detection benchmark.

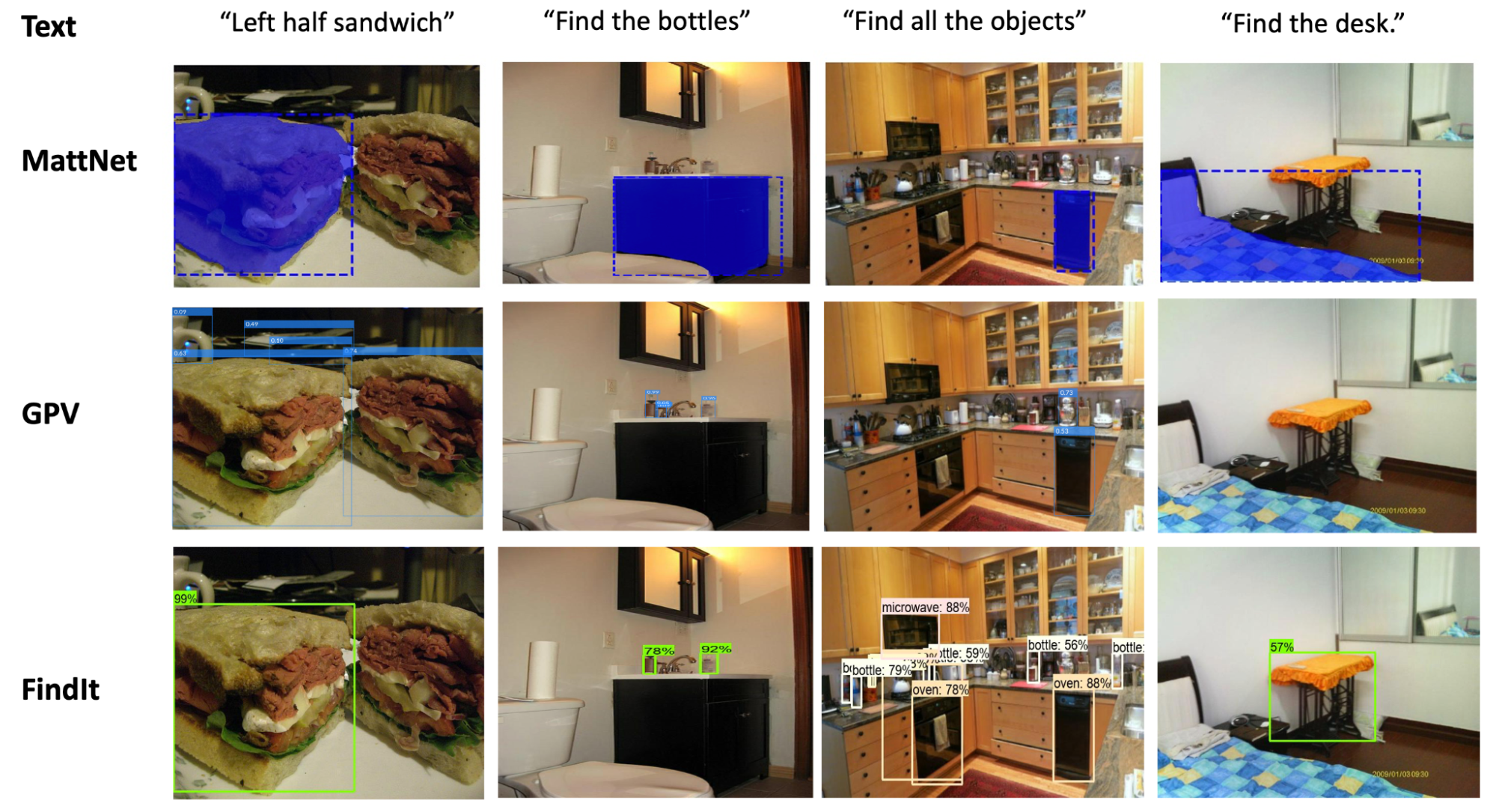

FindIt is a unified model for referring expression comprehension (col. 1), text-based localization (col. 2), and the object detection task (col. 3). FindIt can respond accurately when tested on object types/classes not known during training, e.g. “Find the desk” (col. 4). Compared to existing baselines (MattNet and GPV), FindIt can perform these tasks well and in a single model.

Multi-level Image-Text Fusion

Different localization tasks are created with different semantic understanding objectives. For example, because the referring expression task primarily references prominent objects in the image rather than small, occluded or faraway objects, low resolution images generally suffice. In contrast, the detection task aims to detect objects with various sizes and occlusion levels in higher resolution images. Apart from these benchmarks, the general visual grounding problem is inherently multiscale, as natural queries can refer to objects of any size. This motivates the need for a multi-level image-text fusion model for efficient processing of higher resolution images over different localization tasks.

The premise of FindIt is to fuse the higher-level semantic features using more expressive transformer layers, which can capture all-pair interactions between image and text. For the lower-level and higher-resolution features, we use a cheaper dot-product fusion to save computation and memory cost. We attach a detector head (e.g., Faster R-CNN) on top of the fused feature maps to predict the boxes and their classes.

FindIt accepts an image and a query text as inputs, and processes them separately in image/text backbones before applying the multi-level fusion. We feed the fused features to Faster R-CNN to predict the boxes referred to by the text. The feature fusion uses more expressive transformers at higher levels and cheaper dot-product at the lower levels.
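As a rough illustration of the fusion scheme described above, the following pure-Python sketch applies attention-based fusion at the highest pyramid level and a cheap product-based fusion at the lower levels. It is a minimal sketch under several assumptions, not the FindIt implementation: the function names are hypothetical, the attention is single-head with no learned projections, and the low-level fusion is read here as an elementwise product with a mean-pooled text vector, which is only one plausible interpretation of "dot-product fusion".

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(scores):
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention_fuse(image_feats, text_feats):
    """Higher-level fusion: every image location attends to every text token."""
    d = len(text_feats[0])
    fused = []
    for q in image_feats:
        weights = softmax([dot(q, k) / math.sqrt(d) for k in text_feats])
        context = [sum(w * t[i] for w, t in zip(weights, text_feats))
                   for i in range(d)]
        # Residual connection keeps the original image feature.
        fused.append([qi + ci for qi, ci in zip(q, context)])
    return fused

def product_fuse(image_feats, text_feats):
    """Lower-level fusion (assumed form): elementwise product with pooled text."""
    d = len(text_feats[0])
    pooled = [sum(t[i] for t in text_feats) / len(text_feats) for i in range(d)]
    return [[qi * pi for qi, pi in zip(q, pooled)] for q in image_feats]

def multi_level_fuse(pyramid, text_feats, num_attention_levels=1):
    """pyramid is ordered from high resolution (low level) to low resolution."""
    first_attention = len(pyramid) - num_attention_levels
    return [
        cross_attention_fuse(level, text_feats) if idx >= first_attention
        else product_fuse(level, text_feats)
        for idx, level in enumerate(pyramid)
    ]

# Toy inputs: two text tokens and a two-level feature pyramid (dimension 2).
text = [[1.0, 0.0], [0.0, 1.0]]
pyramid = [
    [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]],  # high-res: 4 locations
    [[1.0, 0.0]],                                      # low-res: 1 location
]
fused = multi_level_fuse(pyramid, text)
```

The cost difference is visible in the loops: the attention path does work proportional to the number of image locations times the number of text tokens, while the product path pools the text once and touches each location a single time, which is why it suits the larger, higher-resolution maps.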

Multitask Learning

Apart from the multi-level fusion described above, we adapt the text-based localization and detection tasks to take the same inputs as the referring expression comprehension task. For the text-based localization task, we generate a set of queries over the categories present in the image. For any present category, the text query takes the form “Find the [object],” where [object] is the category name. The objects corresponding to that category are labeled as foreground and the other objects as background. Instead of using the aforementioned prompt, we use a static prompt for the detection task, such as “Find all the objects.” We found that the specific choice of prompts is not important for text-based localization and detection tasks.
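The query adaptation described above can be sketched in a few lines. This is an illustrative sketch only: the annotation format (a list of `(category_name, box)` pairs with boxes as `(x1, y1, x2, y2)` tuples), the function names, and the dictionary keys are assumptions made for the example. Only the two prompt strings come from the text.

```python
def localization_queries(annotations):
    """Build one "Find the [object]" example per category present in the image.

    annotations: list of (category_name, box) pairs (assumed format).
    """
    categories = sorted({name for name, _ in annotations})
    examples = []
    for category in categories:
        examples.append({
            "query": f"Find the {category}",
            # Boxes of the queried category are foreground ...
            "foreground": [box for name, box in annotations if name == category],
            # ... and every other annotated object is background.
            "background": [box for name, box in annotations if name != category],
        })
    return examples

def detection_query(annotations):
    """Detection uses one static prompt and keeps all boxes with their classes."""
    return {
        "query": "Find all the objects.",
        "boxes": [box for _, box in annotations],
        "classes": [name for name, _ in annotations],
    }

# Toy image annotations: two dogs and one cat.
annotations = [
    ("dog", (0, 0, 10, 10)),
    ("cat", (5, 5, 20, 20)),
    ("dog", (30, 30, 40, 40)),
]
loc_examples = localization_queries(annotations)
det_example = detection_query(annotations)
```

For this toy image, `loc_examples` holds two entries, one for "Find the cat" and one for "Find the dog", while `det_example` carries all three boxes under the static prompt.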

After adaptation, all tasks in consideration share the same inputs and outputs: an image input, a text query, and a set of output bounding boxes and classes. We then combine the datasets and train on the mixture. Finally, we use the standard object detection losses for all tasks, which we found to be surprisingly simple and effective.
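Because every task now shares one example format, training on the mixture reduces to drawing examples from the combined datasets. The sketch below shows one way this could look. The uniform sampling over tasks, the function name `mixture_batches`, and the placeholder examples are all assumptions, since the text does not specify mixing ratios or batch construction.

```python
import random

def mixture_batches(datasets, batch_size, num_batches, seed=0):
    """Yield batches that mix examples from all tasks.

    datasets: dict mapping a task name to a list of unified examples.
    Tasks are sampled uniformly here, which is an assumption.
    """
    rng = random.Random(seed)
    task_names = sorted(datasets)
    for _ in range(num_batches):
        batch = []
        for _ in range(batch_size):
            task = rng.choice(task_names)
            batch.append((task, rng.choice(datasets[task])))
        yield batch

# Placeholder examples; real ones would hold an image, a query, and boxes.
datasets = {
    "referring_expression": ["rec_0", "rec_1"],
    "text_localization": ["loc_0", "loc_1", "loc_2"],
    "detection": ["det_0"],
}
batches = list(mixture_batches(datasets, batch_size=4, num_batches=3))
```

Since each example exposes the same inputs and outputs regardless of its source task, a single detection loss can be applied to every element of a mixed batch without task-specific branching.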

Evaluation

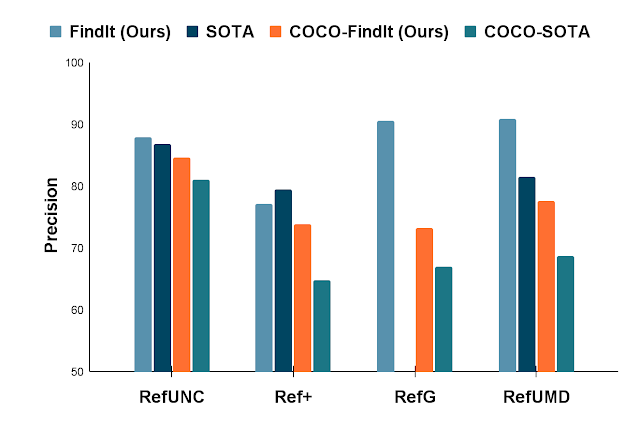

We apply FindIt to the popular RefCOCO benchmark for referring expression comprehension tasks. When only the COCO and RefCOCO datasets are available, FindIt outperforms the state-of-the-art model on all tasks. In the settings where external datasets are allowed, FindIt sets a new state of the art by using COCO and all RefCOCO splits together (no other datasets). On the challenging Google and UMD splits, FindIt outperforms the state of the art by a 10% margin. Taken together, these results demonstrate the benefits of multitask learning.

Comparison with the state of the art on the popular referring expression benchmark. FindIt is superior on both the COCO and unconstrained settings (additional training data allowed).

On the text-based localization benchmark, FindIt achieves 79.7%, higher than the GPV (73.0%) and Faster R-CNN (75.2%) baselines. Please refer to the paper for more quantitative evaluation.

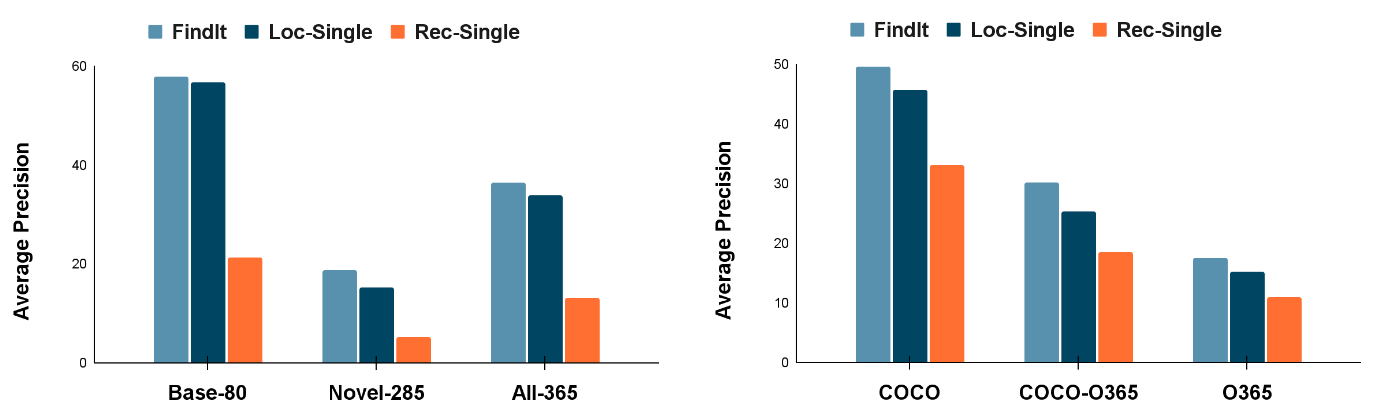

We further observe that FindIt generalizes better to novel categories and super-categories in the text-based localization task compared to competitive single-task baselines on the popular COCO and Objects365 datasets, as shown in the accompanying figure.

Efficiency

We also benchmark the inference times on the referring expression comprehension task (see Table below). FindIt is efficient and comparable with existing one-stage approaches while achieving higher accuracy. For fair comparison, all running times are measured on one GTX 1080Ti GPU.

| Model | Image Size | Backbone | Runtime (ms) |
|---|---|---|---|
| MattNet | 1000 | R101 | 378 |
| FAOA | 256 | DarkNet53 | 39 |
| MCN | 416 | DarkNet53 | 56 |
| TransVG | 640 | R50 | 62 |
| FindIt (Ours) | 640 | R50 | 107 |
| FindIt (Ours) | 384 | R50 | 57 |

Conclusion

We present FindIt, which unifies referring expression comprehension, text-based localization, and object detection tasks. We propose multi-scale cross-attention to unify the diverse localization requirements of these tasks. Without any task-specific design, FindIt surpasses the state of the art on referring expression and text-based localization, shows competitive performance on detection, and generalizes better to out-of-distribution data and novel classes. All of these are accomplished in a single, unified, and efficient model.

Acknowledgements

This work was conducted by Weicheng Kuo, Fred Bertsch, Wei Li, AJ Piergiovanni, Mohammad Saffar, and Anelia Angelova. We would like to thank Ashish Vaswani, Prajit Ramachandran, Niki Parmar, David Luan, Tsung-Yi Lin, and other colleagues at Google Research for their advice and helpful discussions. We would like to thank Tom Small for preparing the animation.