Empowering end-users to interactively teach robots to perform novel tasks is an important capability for their successful integration into real-world applications. For example, a user may want to teach a robot dog to perform a new trick, or teach a manipulator robot how to organize a lunch box based on user preferences. Recent advances in large language models (LLMs) pre-trained on extensive internet data have shown a promising path towards achieving this goal. Indeed, researchers have explored diverse ways of leveraging LLMs for robotics, from step-by-step planning and goal-oriented dialogue to robot-code-writing agents.

While these methods impart new modes of compositional generalization, they focus on using language to link together new behaviors from an existing library of control primitives that are either manually engineered or learned a priori. Despite having internal knowledge about robot motions, LLMs struggle to directly output low-level robot commands due to the limited availability of relevant training data. As a result, the expressiveness of these methods is bottlenecked by the breadth of the available primitives, whose design often requires extensive expert knowledge or massive data collection.

In “Language to Rewards for Robotic Skill Synthesis”, we propose an approach that enables users to teach robots novel actions through natural language input. To do so, we leverage reward functions as an interface that bridges the gap between language and low-level robot actions. We posit that reward functions provide an ideal interface for such tasks given their richness in semantics, modularity, and interpretability. They also provide a direct connection to low-level policies through black-box optimization or reinforcement learning (RL). We developed a language-to-reward system that leverages LLMs to translate natural language user instructions into reward-specifying code and then applies MuJoCo MPC to find optimal low-level robot actions that maximize the generated reward function. We demonstrate our language-to-reward system on a variety of robotic control tasks in simulation using a quadruped robot and a dexterous manipulator robot. We further validate our method on a physical robot manipulator.

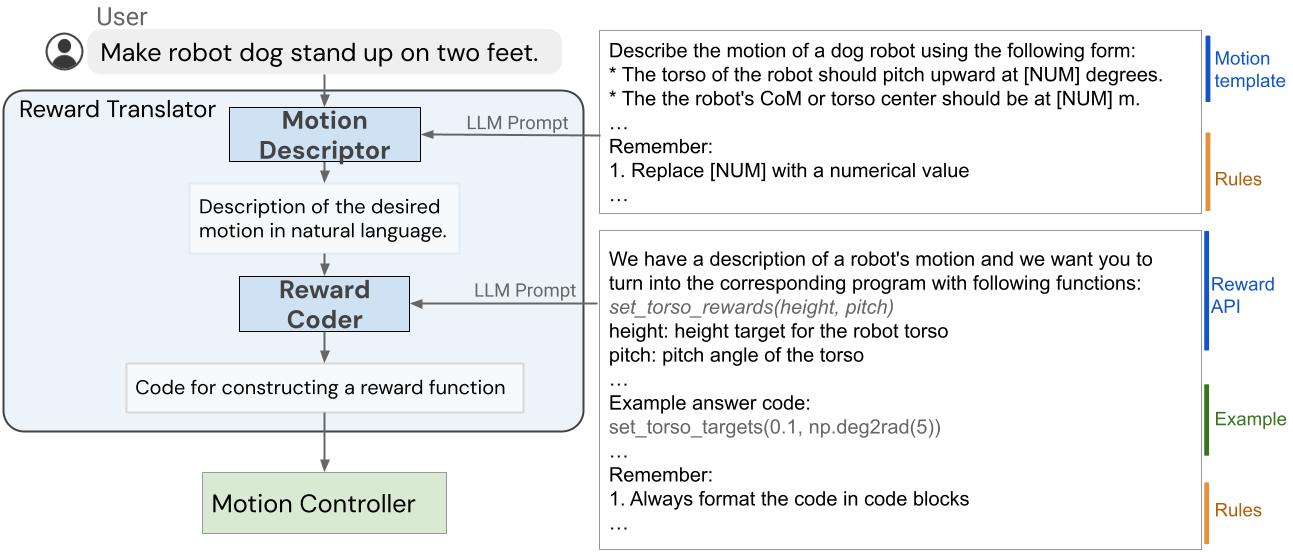

The language-to-reward system consists of two core components: (1) a Reward Translator, and (2) a Motion Controller. The Reward Translator maps natural language instructions from users to reward functions represented as Python code. The Motion Controller optimizes the given reward function using receding horizon optimization to find the optimal low-level robot actions, such as the amount of torque that should be applied to each robot motor.
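The two-stage data flow can be sketched as follows. This is a minimal illustration, not the authors' code: the `RewardTranslator` and `MotionController` protocols, the `language_to_actions` helper, and the toy stand-ins at the bottom are all hypothetical. In the real system the translator queries an LLM and the controller runs MJPC on a MuJoCo model.

```python
from typing import Callable, List, Protocol, Sequence

State = Sequence[float]
RewardFn = Callable[[State, float], float]  # R(s, t) -> scalar reward


class RewardTranslator(Protocol):
    """Hypothetical interface: natural language in, reward function out."""
    def __call__(self, instruction: str) -> RewardFn: ...


class MotionController(Protocol):
    """Hypothetical interface: reward function and current state in, actions out."""
    def __call__(self, reward: RewardFn, state: State) -> List[float]: ...


def language_to_actions(instruction: str, state: State,
                        translator: RewardTranslator,
                        controller: MotionController) -> List[float]:
    reward = translator(instruction)  # stage 1: language -> reward function
    return controller(reward, state)  # stage 2: reward function -> low-level actions


# Toy stand-ins (hypothetical): a fixed "reach x = 1" reward and a one-step
# greedy controller that picks the best of three candidate actions.
def toy_translator(instruction: str) -> RewardFn:
    return lambda s, t: -(s[0] - 1.0) ** 2


def toy_controller(reward: RewardFn, state: State) -> List[float]:
    candidates = [-0.5, 0.0, 0.5]
    best = max(candidates, key=lambda a: reward([state[0] + a], 0.0))
    return [best]


actions = language_to_actions("move to x = 1", [0.4], toy_translator, toy_controller)
```

In this sketch the controller never sees language, only a reward function, so either half can be swapped without touching the other.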

Reward Translator: Translating user instructions to reward functions

The Reward Translator module was built with the goal of mapping natural language user instructions to reward functions. Reward tuning is highly domain-specific and requires expert knowledge, so it was not surprising to us when we found that LLMs trained on generic language datasets are unable to directly generate a reward function for specific hardware. To address this, we apply the in-context learning ability of LLMs. Furthermore, we split the Reward Translator into two sub-modules: Motion Descriptor and Reward Coder.

Motion Descriptor

First, we design a Motion Descriptor that interprets input from a user and expands it into a natural language description of the desired robot motion following a predefined template. The Motion Descriptor turns potentially ambiguous or vague user instructions into more specific and descriptive robot motions, making the reward coding task more stable. Moreover, users interact with the system through the motion description field, so this also provides a more interpretable interface for users compared to directly showing the reward function.

To create the Motion Descriptor, we use an LLM to translate the user input into a detailed description of the desired robot motion. We design prompts that guide the LLM to output the motion description with the right amount of detail and in the right format. By translating a vague user instruction into a more detailed description, we are able to generate the reward function more reliably. This idea could potentially be applied more generally beyond robotics tasks, and is related to Inner-Monologue and chain-of-thought prompting.
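A structured, few-shot prompt for the Motion Descriptor might be assembled like the sketch below. It assumes an in-context prompt made of a template plus worked examples; the template wording, the `[NUM: ...]` slot convention, the example instruction and its numbers, and the `build_descriptor_prompt` helper are all hypothetical illustrations, not the prompt used in the paper.

```python
from textwrap import dedent
from typing import List, Tuple

# Hypothetical motion template; the wording and [NUM: ...] slots are
# illustrative, not the template used in the paper.
MOTION_TEMPLATE = dedent("""\
    [start of description]
    The torso of the robot should be [NUM: 0.0] meters high.
    The torso of the robot should pitch by [NUM: 0.0] degrees.
    The robot should move forward at [NUM: 0.0] meters per second.
    [end of description]""")

# One in-context example mapping a vague instruction to a filled-in template
# (the numbers are made up for illustration).
EXAMPLES: List[Tuple[str, str]] = [
    ("Walk forward slowly.", dedent("""\
        [start of description]
        The torso of the robot should be 0.3 meters high.
        The torso of the robot should pitch by 0.0 degrees.
        The robot should move forward at 0.2 meters per second.
        [end of description]""")),
]


def build_descriptor_prompt(instruction: str) -> str:
    """Assemble a few-shot prompt that asks the LLM to fill in the template."""
    parts = ["Describe the desired robot motion using exactly this template:",
             MOTION_TEMPLATE, ""]
    for user_text, description in EXAMPLES:
        parts += [f"User: {user_text}", description, ""]
    # End with the opening marker so the completion starts inside the template.
    parts += [f"User: {instruction}", "[start of description]"]
    return "\n".join(parts)


prompt = build_descriptor_prompt("Make the robot dog sit down.")
```

Ending the prompt on the opening marker is meant to nudge the model into the template's fixed format, which keeps the description easy for the Reward Coder to consume.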

Reward Coder

In the second stage, the Reward Coder uses the same LLM as the Motion Descriptor to translate the generated motion description into a reward function. Reward functions are represented as Python code to benefit from the LLM's knowledge of rewards, coding, and code structure.

Ideally, we would like an LLM to directly generate a reward function R(s, t) that maps the robot state s and time t to a scalar reward value. However, generating the correct reward function from scratch is still a challenging problem for LLMs, and correcting the errors requires the user to understand the generated code in order to provide the right feedback. We therefore pre-define a set of reward terms that are commonly used for the robot of interest and allow the LLM to compose different reward terms into the final reward function. To achieve this, we design a prompt that specifies the reward terms and guides the LLM to generate the correct reward function for the task.
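One way to let an LLM compose pre-defined reward terms is to expose each term as a setter function and execute the generated code against only those functions. This is a sketch under assumptions: the term names (`set_torso_height`, `set_forward_velocity`), the quadratic form of each term, and combining terms as a weighted sum are hypothetical choices for illustration, not the reward terms defined in the paper.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, float]
RewardFn = Callable[[State, float], float]


# Hypothetical pre-defined reward terms; each returns a function r(s, t).
def torso_height_term(target: float) -> RewardFn:
    return lambda s, t: -(s["torso_height"] - target) ** 2


def forward_velocity_term(target: float) -> RewardFn:
    return lambda s, t: -(s["forward_velocity"] - target) ** 2


class RewardBuilder:
    """Collects the weighted terms that the generated code asks for."""

    def __init__(self) -> None:
        self.terms: List[Tuple[float, RewardFn]] = []

    def set_torso_height(self, target: float, weight: float = 1.0) -> None:
        self.terms.append((weight, torso_height_term(target)))

    def set_forward_velocity(self, target: float, weight: float = 1.0) -> None:
        self.terms.append((weight, forward_velocity_term(target)))

    def reward(self, s: State, t: float) -> float:
        # Assumed composition rule: R(s, t) = sum_i w_i * r_i(s, t).
        return sum(w * term(s, t) for w, term in self.terms)


def compile_reward_code(code: str) -> RewardFn:
    """Execute LLM-generated code that may only call the setter functions.

    Emptying __builtins__ limits what the snippet can reach, but it is not a
    security sandbox; untrusted output needs stronger isolation.
    """
    builder = RewardBuilder()
    namespace = {
        "__builtins__": {},
        "set_torso_height": builder.set_torso_height,
        "set_forward_velocity": builder.set_forward_velocity,
    }
    exec(code, namespace)
    return builder.reward


# Code an LLM might emit for "trot forward at 0.5 m/s" (made-up numbers).
generated = "set_torso_height(0.3)\nset_forward_velocity(0.5, weight=2.0)"
R = compile_reward_code(generated)
```

Restricting the LLM to a small vocabulary of terms means a wrong reward usually shows up as a wrong target or weight, which is easier for a user to spot and correct than an error buried in free-form code.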

The internal structure of the Reward Translator, which is tasked with mapping user inputs to reward functions.

Motion Controller: Translating reward functions to robot actions

The Motion Controller takes the reward function generated by the Reward Translator and synthesizes a controller that maps robot observations to low-level robot actions. To do this, we formulate the controller synthesis problem as a Markov decision process (MDP), which can be solved using different strategies, including RL, offline trajectory optimization, or model predictive control (MPC). Specifically, we use an open-source implementation based on MuJoCo MPC (MJPC).

MJPC has demonstrated the interactive creation of diverse behaviors, such as legged locomotion, grasping, and finger-gaiting, while supporting multiple planning algorithms, such as iterative linear–quadratic–Gaussian (iLQG) and predictive sampling. More importantly, frequent re-planning in MJPC makes it robust to uncertainties in the system and enables an interactive motion synthesis and correction system when combined with LLMs.
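The receding-horizon loop can be illustrated with a simplified, pure-Python take on predictive sampling. This is a sketch, not MJPC: it replaces the MuJoCo model with a hypothetical 1-D point mass, represents the plan as raw per-step accelerations, and assumes the sample count, noise scale, horizon, and time step shown. Each planning iteration perturbs the current nominal plan, keeps whichever candidate (including the unperturbed nominal) has the highest predicted return, applies only the first action, and then re-plans from the new state.

```python
import random
from typing import Callable, List, Tuple

State = Tuple[float, float]  # (position, velocity) of a toy 1-D point mass
Reward = Callable[[State, float], float]
DT = 0.05  # assumed control/integration time step in seconds


def step(s: State, u: float) -> State:
    """Toy dynamics standing in for the simulator: unit mass, acceleration u."""
    pos, vel = s
    vel = vel + DT * u
    return (pos + DT * vel, vel)


def rollout_return(s: State, plan: List[float], reward: Reward) -> float:
    """Simulate the plan from state s and sum the reward along the way."""
    total = 0.0
    for k, u in enumerate(plan):
        s = step(s, u)
        total += reward(s, (k + 1) * DT)
    return total


def predictive_sampling(s: State, nominal: List[float], reward: Reward,
                        rng: random.Random, num_samples: int = 32,
                        noise: float = 1.0) -> List[float]:
    """One planning iteration: perturb the nominal plan with Gaussian noise and
    keep the best candidate; the nominal itself survives if nothing beats it."""
    best, best_ret = nominal, rollout_return(s, nominal, reward)
    for _ in range(num_samples):
        cand = [u + rng.gauss(0.0, noise) for u in nominal]
        ret = rollout_return(s, cand, reward)
        if ret > best_ret:
            best, best_ret = cand, ret
    return best


def run_mpc(s: State, reward: Reward, horizon: int = 20,
            steps: int = 100, seed: int = 0) -> State:
    """Receding-horizon control: re-plan at every step, execute the first action."""
    rng = random.Random(seed)
    plan = [0.0] * horizon
    for _ in range(steps):
        plan = predictive_sampling(s, plan, reward, rng)
        s = step(s, plan[0])
        plan = plan[1:] + [plan[-1]]  # warm start: shift the plan by one step
    return s


# Example reward: reach position 1.0 and come to rest there.
def target_reward(s: State, t: float) -> float:
    return -(s[0] - 1.0) ** 2 - 0.1 * s[1] ** 2


final = run_mpc((0.0, 0.0), target_reward)
```

Because the plan is re-optimized at every control step, a disturbance or a newly generated reward simply changes what the next iteration optimizes, which is the property that makes interactive correction with an LLM practical.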

Examples

Robot dog

In the first example, we apply the language-to-reward system to a simulated quadruped robot and teach it to perform various skills. For each skill, the user provides a concise instruction to the system, which then synthesizes the robot motion by using reward functions as an intermediate interface.

Dexterous manipulator

We then apply the language-to-reward system to a dexterous manipulator robot to perform a variety of manipulation tasks. The dexterous manipulator has 27 degrees of freedom, which makes it very challenging to control. Many of these tasks require manipulation skills beyond grasping, making it difficult for pre-designed primitives to work. We also include an example where the user interactively instructs the robot to place an apple inside a drawer.

Validation on real robots

We also validate the language-to-reward method using a real-world manipulation robot to perform tasks such as picking up objects and opening a drawer. To perform the optimization in the Motion Controller, we use AprilTag, a fiducial marker system, and F-VLM, an open-vocabulary object detection tool, to identify the positions of the table and of the objects being manipulated.

Conclusion

In this work, we describe a new paradigm for interfacing an LLM with a robot through reward functions, powered by a low-level model predictive control tool, MuJoCo MPC. Using reward functions as the interface enables LLMs to work in a semantically rich space that plays to their strengths, while ensuring the expressiveness of the resulting controller. To further improve the performance of the system, we propose using a structured motion description template to better extract internal knowledge about robot motions from LLMs. We demonstrate our proposed system on two simulated robot platforms and one real robot for both locomotion and manipulation tasks.

Acknowledgements

We would like to thank our co-authors Nimrod Gileadi, Chuyuan Fu, Sean Kirmani, Kuang-Huei Lee, Montse Gonzalez Arenas, Hao-Tien Lewis Chiang, Tom Erez, Leonard Hasenclever, Brian Ichter, Ted Xiao, Peng Xu, Andy Zeng, Tingnan Zhang, Nicolas Heess, Dorsa Sadigh, Jie Tan, and Yuval Tassa for their help and support in various aspects of the project. We would also like to acknowledge Ken Caluwaerts, Kristian Hartikainen, Steven Bohez, Carolina Parada, Marc Toussaint, and the greater teams at Google DeepMind for their feedback and contributions.