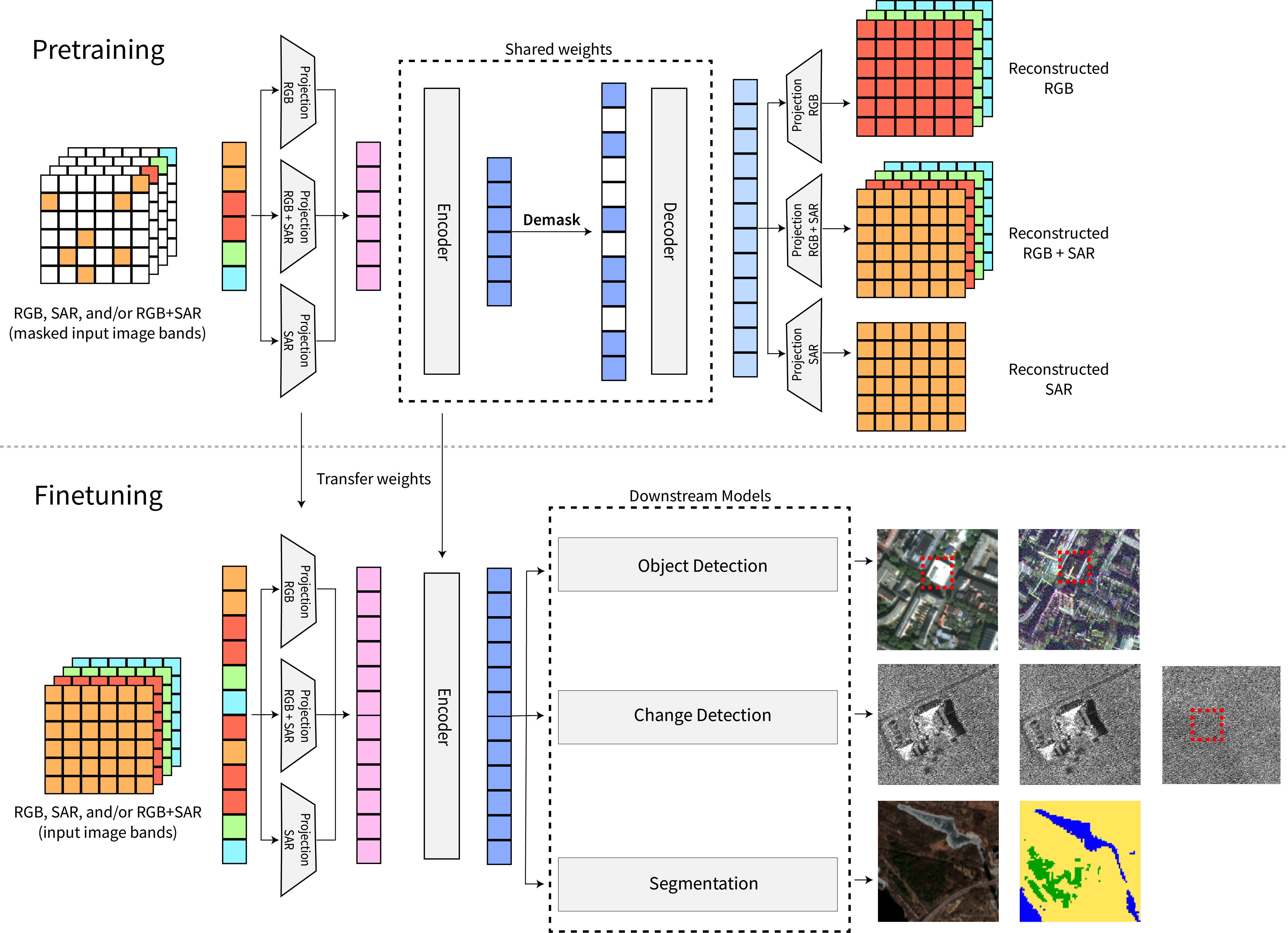

Figure 1: Summary of our recommendations for when a practitioner should use BC and various imitation learning style methods, and when they should use offline RL approaches.

Offline reinforcement learning allows learning policies from previously collected data, which has profound implications for applying RL in domains where running trial-and-error learning is impractical or dangerous, such as safety-critical settings like autonomous driving or medical treatment planning. In such scenarios, online exploration is simply too risky, but offline RL methods can learn effective policies from logged data collected by humans or heuristically designed controllers. Prior learning-based control methods have also approached learning from existing data as imitation learning: if the data is generally “good enough,” simply copying the behavior in the data can lead to good results, and if it’s not good enough, then filtering or reweighting the data and then copying can work well. Several recent works suggest that this is a viable alternative to modern offline RL methods.

This brings about several questions: when should we use offline RL? Are there fundamental limitations to methods that rely on some form of imitation (BC, conditional BC, filtered BC) that offline RL addresses? While it might be clear that offline RL should enjoy a large advantage over imitation learning when learning from diverse datasets that contain a lot of suboptimal behavior, we will also discuss how even cases that might seem BC-friendly can still allow offline RL to attain significantly better results. Our goal is to help explain when and why you should use each method and provide guidance to practitioners on the benefits of each approach. Figure 1 concisely summarizes our findings and we’ll discuss each component.

Methods for Learning from Offline Data

Let’s start with a brief recap of the various methods for learning policies from data that we’ll discuss. The learning algorithm is provided with an offline dataset \(\mathcal{D}\), consisting of trajectories \(\{\tau_i\}_{i=1}^N\) generated by some behavior policy. Most offline RL methods perform some sort of dynamic programming (e.g., Q-learning) updates on the provided data, aiming to obtain a value function. This typically requires adjusting for distributional shift to work well, but when this is done properly, it leads to good results.
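As a rough illustration of the dynamic programming update mentioned above, a standard Q-learning backup restricted to logged transitions can be written as follows. The symbols \(Q\), \(\gamma\) (discount factor), and the transition tuple \((s, a, r, s')\) are notation assumed for this sketch; they are not defined elsewhere in this post.

\[
Q(s, a) \leftarrow r + \gamma \max_{a'} Q(s', a') \quad \text{for each } (s, a, r, s') \in \mathcal{D}
\]

The maximization over \(a'\) may query actions that never appear in \(\mathcal{D}\) at \(s'\), which is one way to see where the adjustment for distributional shift comes in.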

On the other hand, methods based on imitation learning attempt to simply clone the actions observed in the dataset if the dataset is good enough, or perform some kind of filtering or conditioning to extract useful behavior when the dataset is not good. For instance, recent work filters trajectories based on their return, or directly filters individual transitions based on how advantageous these could be under the behavior policy and then clones them. Conditional BC methods are based on the idea that every transition or trajectory is optimal when conditioned on the right variable. This way, after conditioning, the data becomes optimal given the value of the conditioning variable, and in principle we could then condition on the desired task, such as a high reward value, and get a near-optimal trajectory. For example, a trajectory that attains a return of \(R_0\) is optimal if our goal is to attain return \(R = R_0\) (RCPs, decision transformer); a trajectory that reaches goal \(g_0\) is optimal for reaching \(g = g_0\) (GCSL, RvS). Thus, one can perform reward-conditioned BC or goal-conditioned BC, and execute the learned policies with the desired value of return or goal during evaluation. This approach to offline RL bypasses learning value functions or dynamics models entirely, which can make it simpler to use. However, does it actually solve the general offline RL problem?
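To make the filtering idea concrete, here is a minimal tabular sketch of return-filtered BC (in the spirit of %BC). It is an illustration under stated assumptions, not the implementation used in any of the cited works: the toy dataset, the function names `filter_top_fraction` and `behavior_clone`, and the majority-vote "cloning" rule are all hypothetical.

```python
from collections import Counter, defaultdict

def filter_top_fraction(trajectories, fraction=0.1):
    """Keep the top `fraction` of trajectories, ranked by total return."""
    ranked = sorted(trajectories, key=lambda t: sum(r for _, _, r in t), reverse=True)
    keep = max(1, int(len(ranked) * fraction))  # always keep at least one trajectory
    return ranked[:keep]

def behavior_clone(trajectories):
    """Tabular BC: choose the most frequently observed action at each state."""
    counts = defaultdict(Counter)
    for trajectory in trajectories:
        for state, action, _ in trajectory:
            counts[state][action] += 1
    return {state: c.most_common(1)[0][0] for state, c in counts.items()}

# Hypothetical toy dataset: each trajectory is a list of (state, action, reward).
dataset = [
    [("s0", "left", 0.0), ("s1", "left", 0.0)],    # return 0.0
    [("s0", "left", 0.0), ("s1", "right", 0.1)],   # return 0.1
    [("s0", "right", 0.0), ("s2", "right", 1.0)],  # return 1.0
]

plain_bc = behavior_clone(dataset)                                        # copies the majority
filtered_bc = behavior_clone(filter_top_fraction(dataset, fraction=0.34))  # copies the best third
```

In this toy dataset, plain BC copies the majority action at `s0`, while the filtered variant copies the single highest-return trajectory. Note that filtering can only select among complete trajectories that already exist in the data.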

What We Already Know About RL vs Imitation Methods

Perhaps a good place to start our discussion is to review the performance of offline RL and imitation-style methods on benchmark tasks. In the table below, we review the performance of some recent methods for learning from offline data on a subset of the D4RL benchmark.

Table 1: Dichotomy of empirical results on several tasks in D4RL. While imitation-style methods (decision transformer, %BC, one-step RL, conditional BC) perform at par with and can outperform offline RL methods (CQL, IQL) on the locomotion tasks, these methods simply break down on the more complex maze navigation tasks.

Observe in the table that while imitation-style methods perform at par with offline RL methods across the span of the locomotion tasks, offline RL approaches outperform these methods (except goal-conditioned BC, which we’ll discuss towards the end of this post) by a large margin on the antmaze tasks. What explains this difference? As we’ll discuss in this blog post, methods that rely on imitation learning are often quite effective when the behavior in the offline dataset consists of some complete trajectories that perform well. This is true for most replay-buffer style datasets, and all of the locomotion datasets in D4RL are generated from replay buffers of online RL algorithms. In such cases, simply filtering good trajectories and executing the mode of the filtered trajectories will work well. This explains why %BC, one-step RL and decision transformer work quite well. However, offline RL methods can vastly outperform BC methods when this stringent requirement is not met, because they benefit from a form of “temporal compositionality” which enables them to learn from suboptimal data. This explains the enormous difference between RL and imitation results on the antmazes.

Offline RL Can Solve Problems that Conditional, Filtered or Weighted BC Cannot

To understand why offline RL can solve problems that the aforementioned BC methods cannot, let’s ground our discussion in a simple, didactic example. Let’s consider the navigation task shown in the figure below, where the goal is to navigate from the starting location A to the goal location D in the maze. This is directly representative of several real-world decision-making scenarios in mobile robot navigation and provides an abstract model for an RL problem in domains such as robotics or recommender systems. Imagine you are provided with data that shows how the agent can navigate from location A to B and how it can navigate from C to E, but no single trajectory in the dataset goes from A to D. Clearly, the offline dataset shown below provides enough information for discovering a way to navigate to D: by combining different paths that cross each other at location E. But can various offline learning methods find a way to go from A to D?

Figure 2: Illustration of the base case of temporal compositionality or stitching that is needed to find optimal trajectories in various problem domains.

It turns out that, while offline RL methods are able to discover the path from A to D, various imitation-style methods cannot. This is because offline RL algorithms can “stitch” suboptimal trajectories together: while the trajectories \(\tau_i\) in the offline dataset might attain poor return, a better policy can be obtained by combining good segments of trajectories (A→E + E→D = A→D). This ability to stitch segments of trajectories temporally is the hallmark of value-based offline RL algorithms that utilize Bellman backups, but cloning (a subset of) the data or trajectory-level sequence models are unable to extract this information, since no single trajectory from A to D is observed in the offline dataset!
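The stitching argument can be reproduced in a few lines. The sketch below is a toy under stated assumptions: the two logged trajectories (A→E→B and C→E→D), the action names, the reward of 1 for reaching D, and the discount of 0.9 are all hypothetical, and restricting the backup to actions seen in the data is a crude stand-in for the distributional-shift corrections that real offline RL methods use.

```python
GAMMA = 0.9

# Hypothetical logged transitions: (state, action, reward, next_state, done).
traj_1 = [("A", "right", 0.0, "E", False), ("E", "up", 0.0, "B", True)]
traj_2 = [("C", "right", 0.0, "E", False), ("E", "down", 1.0, "D", True)]
dataset = traj_1 + traj_2

def fitted_q(dataset, gamma=GAMMA, sweeps=50):
    """Repeated Bellman backups over logged transitions, maximizing only over seen actions."""
    q = {(s, a): 0.0 for s, a, _, _, _ in dataset}
    for _ in range(sweeps):
        for s, a, r, s_next, done in dataset:
            next_values = [v for (s2, _), v in q.items() if s2 == s_next]
            bootstrap = 0.0 if done or not next_values else max(next_values)
            q[(s, a)] = r + gamma * bootstrap
    return q

def greedy_policy(q):
    """Pick the highest-valued in-dataset action at every state."""
    policy = {}
    for (s, a), v in q.items():
        if s not in policy or v > q[(s, policy[s])]:
            policy[s] = a
    return policy

def rollout(policy, start, dataset, max_steps=10):
    """Follow the policy using the deterministic transitions recorded in the data."""
    dynamics = {(s, a): (s_next, done) for s, a, _, s_next, done in dataset}
    path, state = [start], start
    for _ in range(max_steps):
        if state not in policy:
            break
        state, done = dynamics[(state, policy[state])]
        path.append(state)
        if done:
            break
    return path

q = fitted_q(dataset)
rl_path = rollout(greedy_policy(q), "A", dataset)   # value-based: stitches at E

# Cloning the only trajectory that starts at A simply replays it.
bc_policy = {s: a for s, a, _, _, _ in traj_1}
bc_path = rollout(bc_policy, "A", dataset)
```

Here the backup at E propagates the reward observed in the second trajectory to the action taken at A in the first one, so the greedy policy reaches D even though no logged trajectory does. The cloned policy ends at B.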

Why should you care about stitching and these mazes? One might now wonder if this stitching phenomenon is only useful in some esoteric edge cases or if it is an actual, practically-relevant phenomenon. Certainly stitching appears very explicitly in multi-stage robotic manipulation tasks and also in navigation tasks. However, stitching is not limited to just these domains: it turns out that the need for stitching implicitly appears even in tasks that do not appear to contain a maze. In practice, effective policies would often require finding an “extreme” but high-rewarding action, very different from an action that the behavior policy would prescribe, at every state and learning to stitch such actions to obtain a policy that performs well overall. This form of implicit stitching appears in many practical applications: for example, one might want to find an HVAC control policy that minimizes the carbon footprint of a building with a dataset collected from distinct control policies run historically in different buildings, each of which is suboptimal in one way or the other. In this case, one can still get a much better policy by stitching extreme actions at every state. In general, this implicit form of stitching is required in cases where we wish to find really good policies that maximize a continuous value (e.g., maximize rider comfort in autonomous driving; maximize profits in automatic stock trading) using a dataset collected from a mixture of suboptimal policies (e.g., data from different human drivers; data from different human traders who excel and underperform under different situations) that never execute extreme actions at each decision. However, by stitching such extreme actions at each decision, one can obtain a much better policy. Therefore, succeeding at many problems naturally requires learning to either explicitly or implicitly stitch trajectories, segments or even single decisions, and offline RL is good at it.

The next natural question to ask is: can we resolve this issue by adding an RL-like component to BC methods? One recently-studied approach is to perform a limited number of policy improvement steps beyond behavior cloning. That is, while full offline RL performs multiple rounds of policy improvement until we find an optimal policy, one can just find a policy by running one step of policy improvement beyond behavioral cloning. This policy improvement is performed by incorporating some sort of a value function, and one might hope that utilizing some form of Bellman backup equips the method with the ability to “stitch”. Unfortunately, even this approach is unable to fully close the gap against offline RL. This is because while the one-step approach can stitch trajectory segments, it would often end up stitching the wrong segments! Because one step of policy improvement only myopically improves the policy, without taking into account the impact of updating the policy on future outcomes, the policy may fail to identify truly optimal behavior. For example, in our maze example shown below, it might appear better for the agent to find a solution that decides to go upwards and attain mediocre reward compared to going towards the goal, since under the behavior policy going downwards might appear highly suboptimal.

Figure 3: Imitation-style methods that only perform a limited number of policy improvement steps may still fall prey to choosing suboptimal actions, because the optimal action assuming that the agent will follow the behavior policy in the future may actually not be optimal for the full sequential decision making problem.
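The failure mode of one-step improvement can be seen in a tiny hand-built example. This is a sketch under stated assumptions, not a reproduction of the maze in the figure: the three-state MDP, its rewards, the behavior policy probabilities, and the discount are hypothetical, and a known tabular model is used in place of learning from samples.

```python
GAMMA = 0.9

# Hypothetical deterministic MDP: (state, action) -> (reward, next_state); None is terminal.
MDP = {
    ("S", "up"): (0.0, "U"),
    ("S", "down"): (0.0, "V"),
    ("U", "exit"): (0.5, None),     # mediocre but reliable reward
    ("V", "goal"): (1.0, None),     # best outcome
    ("V", "wander"): (0.0, None),   # what the behavior policy usually does at V
}

# Hypothetical behavior policy: action probabilities at each state.
BEHAVIOR = {
    "S": {"up": 0.5, "down": 0.5},
    "U": {"exit": 1.0},
    "V": {"goal": 0.1, "wander": 0.9},
}

def actions(state):
    return [a for (s, a) in MDP if s == state]

def evaluate_q(next_value, sweeps=20):
    """Iterate Q(s, a) = r + gamma * next_value(Q, s') until it settles."""
    q = {sa: 0.0 for sa in MDP}
    for _ in range(sweeps):
        for (s, a), (r, s_next) in MDP.items():
            bootstrap = 0.0 if s_next is None else next_value(q, s_next)
            q[(s, a)] = r + GAMMA * bootstrap
    return q

def behavior_value(q, state):
    """Expected value if the behavior policy acts from `state` onwards."""
    return sum(p * q[(state, a)] for a, p in BEHAVIOR[state].items())

def optimal_value(q, state):
    """Value if the agent acts greedily from `state` onwards."""
    return max(q[(state, a)] for a in actions(state))

def greedy(q):
    return {s: max(actions(s), key=lambda a: q[(s, a)]) for s in BEHAVIOR}

q_behavior = evaluate_q(behavior_value)  # evaluates the behavior policy only
q_optimal = evaluate_q(optimal_value)    # repeated improvement (value iteration)

one_step_policy = greedy(q_behavior)     # a single improvement step
full_policy = greedy(q_optimal)          # improvement run to convergence
```

Under the behavior policy's Q-function, going down from `S` looks poor because the behavior policy usually wanders at `V`, so the one-step policy heads up for the mediocre reward. It does pick `goal` at `V`, but it never goes there. Running improvement to convergence accounts for the improved choice at `V` and goes down.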

Is Offline RL Useful When Stitching is Not a Primary Concern?

So far, our analysis reveals that offline RL methods are better due to good “stitching” properties. But one might wonder if stitching is critical when provided with good data, such as demonstration data in robotics or data from good policies in healthcare. However, in our recent paper, we find that even when temporal compositionality is not a primary concern, offline RL does provide benefits over imitation learning.

Offline RL can teach the agent what to “not do”. Perhaps one of the biggest benefits of offline RL algorithms is that running RL on noisy datasets generated from stochastic policies can not only teach the agent what it should do to maximize return, but also what shouldn’t be done and how actions at a given state would influence the chance of the agent ending up in undesirable scenarios in the future. In contrast, any form of conditional or weighted BC only teaches the policy “do X”, without explicitly discouraging particularly low-rewarding or unsafe behavior. This is especially relevant in open-world settings such as robotic manipulation in diverse settings or making decisions about patient admission in an ICU, where knowing very clearly what not to do is essential. In our paper, we quantify the gain of accurately inferring “what to not do and how much it hurts” and describe this intuition pictorially below. Often obtaining such noisy data is easy: one could augment expert demonstration data with additional “negatives” or “fake data” generated from a simulator (e.g., robotics, autonomous driving), or by first running an imitation learning method and creating a dataset for offline RL that augments data with evaluation rollouts from the imitation-learned policy.

Figure 4: By leveraging noisy data, offline RL algorithms can learn to figure out what shouldn’t be done in order to explicitly avoid regions of low reward, and how the agent could be overly cautious much before that.

Is offline RL useful at all when I actually have near-expert demonstrations? As the final scenario, let’s consider the case where we actually have only near-expert demonstrations, perhaps the perfect setting for imitation learning. In such a setting, there is no opportunity for stitching or leveraging noisy data to learn what not to do. Can offline RL still improve upon imitation learning? Unfortunately, one can show that, in the worst case, no algorithm can perform better than standard behavioral cloning. However, if the task admits some structure, then offline RL policies can be more robust. For example, if there are multiple states where it is easy to identify a good action using reward information, offline RL approaches can quickly converge to a good action at such states, whereas a standard BC approach that does not utilize rewards may fail to identify a good action, leading to policies that are non-robust and fail to solve the task. Therefore, offline RL is a preferred option for tasks with an abundance of such “non-critical” states where long-term reward can easily identify a good action. An illustration of this idea is shown below, and we formally prove a theoretical result quantifying these intuitions in the paper.

Figure 5: An illustration of the idea of non-critical states: the abundance of states where reward information can easily identify good actions at a given state can help offline RL, even when provided with expert demonstrations, compared to standard BC, which does not utilize any kind of reward information.

So, When Is Imitation Learning Useful?

Our discussion has so far highlighted that offline RL methods can be robust and effective in many scenarios where conditional and weighted BC might fail. Therefore, we now seek to understand if conditional or weighted BC are useful in certain problem settings. This question is easy to answer in the context of standard behavioral cloning: if your data consists of expert demonstrations that you wish to mimic, standard behavioral cloning is a relatively simple, good choice. However, this approach fails when the data is noisy or suboptimal or when the task changes (e.g., when the distribution of initial states changes). And offline RL may still be preferred in settings with some structure (as we discussed above). Some failures of BC can be resolved by utilizing filtered BC: if the data consists of a mixture of good and bad trajectories, filtering trajectories based on return can be a good idea. Similarly, one could use one-step RL if the task does not require any form of stitching. However, in all of these cases, offline RL might be a better alternative, especially if the task or the environment satisfies some conditions, and might be worth trying at least.

Conditional BC performs well on a problem when one can obtain a conditioning variable well-suited to a given task. For example, empirical results on the antmaze domains from recent work indicate that conditional BC with a goal as a conditioning variable is quite effective in goal-reaching problems, whereas conditioning on returns is not (compare Conditional BC (goals) vs Conditional BC (returns) in Table 1). Intuitively, this “well-suited” conditioning variable essentially enables stitching: for instance, a navigation problem naturally decomposes into a sequence of intermediate goal-reaching problems, and one can then stitch solutions to a cleverly chosen subset of intermediate goal-reaching problems to solve the complete task. At its core, the success of conditional BC requires some domain knowledge about the compositionality structure in the task. On the other hand, offline RL methods extract the underlying stitching structure by running dynamic programming, and work well more generally. Technically, one could combine these ideas and utilize dynamic programming to learn a value function and then obtain a policy by running conditional BC with the value function as the conditioning variable, and this can work quite well (compare RCP-A to RCP-R here, where RCP-A uses a value function for conditioning; compare TT+Q and TT here)!
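The role of a well-chosen conditioning variable can be sketched with a tabular goal-conditioned BC policy trained with hindsight relabeling. This is a toy under stated assumptions: the two trajectories (A→E→B and C→E→D), the action names, and the helper names are hypothetical, and a lookup table stands in for a learned policy, so any generalization a neural network might provide is deliberately absent.

```python
def hindsight_relabel(trajectory):
    """Return (state, goal, action) triples, using every later state as a goal."""
    examples = []
    for i, (state, action, _) in enumerate(trajectory):
        for _, _, reached in trajectory[i:]:
            examples.append((state, reached, action))
    return examples

def fit_goal_conditioned_bc(trajectories):
    """Tabular goal-conditioned BC: memorize the action taken for each (state, goal)."""
    policy = {}
    for trajectory in trajectories:
        for state, goal, action in hindsight_relabel(trajectory):
            policy[(state, goal)] = action
    return policy

def follow_subgoals(policy, start, subgoals, dynamics, max_steps=10):
    """Chase each subgoal in turn; return visited states, or None if the policy has no entry."""
    path, state = [start], start
    for goal in subgoals:
        steps = 0
        while state != goal:
            if (state, goal) not in policy or steps >= max_steps:
                return None
            state = dynamics[(state, policy[(state, goal)])]
            path.append(state)
            steps += 1
    return path

# Hypothetical logged trajectories as (state, action, next_state) steps.
traj_1 = [("A", "right", "E"), ("E", "up", "B")]
traj_2 = [("C", "right", "E"), ("E", "down", "D")]
trajectories = [traj_1, traj_2]

policy = fit_goal_conditioned_bc(trajectories)
dynamics = {(s, a): s_next for traj in trajectories for s, a, s_next in traj}

direct = follow_subgoals(policy, "A", ["D"], dynamics)       # no (A, D) pair was ever observed
via_e = follow_subgoals(policy, "A", ["E", "D"], dynamics)   # intermediate goal E supplied by hand
```

Conditioning on D directly fails because no trajectory links A to D, while supplying the intermediate goal E lets the same policy reach D. Choosing E is the domain knowledge: the decomposition is provided from outside, not discovered by the method.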

In our discussion so far, we’ve already studied settings such as the antmazes, where offline RL methods can significantly outperform imitation-style methods due to stitching. We’ll now quickly discuss some empirical results that compare the performance of offline RL and BC on tasks where we are provided with near-expert demonstration data.

Figure 6: Comparing full offline RL (CQL) to imitation-style methods (One-step RL and BC) averaged over 7 Atari games, with expert demonstration data and noisy-expert data. Empirical details here.

In our final experiment, we compare the performance of offline RL methods to imitation-style methods on an average over seven Atari games. We use conservative Q-learning (CQL) as our representative offline RL method. Note that naively running offline RL (“Naive CQL (Expert)”), without proper cross-validation to prevent overfitting and underfitting, does not improve over BC. However, offline RL equipped with a reasonable cross-validation procedure (“Tuned CQL (Expert)”) is able to clearly improve over BC. This highlights the need for understanding how offline RL methods must be tuned, and at least in part explains the poor performance of offline RL when learning from demonstration data in prior works. Incorporating a bit of noisy data that can inform the algorithm of what it shouldn’t do further improves performance (“CQL (Noisy Expert)” vs “BC (Expert)”) within an identical data budget. Finally, note that while one would expect that one step of policy improvement can be quite effective, we found that it is quite sensitive to hyperparameters and fails to improve over BC significantly. These observations validate the findings discussed earlier in the blog post. We discuss results on other domains in our paper, which we encourage practitioners to check out.

In this blog post, we aimed to understand if, when and why offline RL is a better approach for tackling a variety of sequential decision-making problems. Our discussion suggests that offline RL methods that learn value functions can leverage the benefits of stitching, which can be crucial in many problems. Moreover, there are even scenarios with expert or near-expert demonstration data where running offline RL is a good idea. We summarize our recommendations for practitioners in Figure 1, shown right at the beginning of this blog post. We hope that our analysis improves the understanding of the benefits and properties of offline RL approaches.

This blog post is primarily based on the paper:

When Should Offline RL Be Preferred Over Behavioral Cloning?

Aviral Kumar*, Joey Hong*, Anikait Singh, Sergey Levine [arxiv].

In International Conference on Learning Representations (ICLR), 2022.

In addition, the empirical results discussed in the blog post are taken from various papers, in particular from RvS and IQL.