Deep reinforcement learning (RL) continues to make great strides in solving real-world sequential decision-making problems such as balloon navigation, nuclear physics, robotics, and games. Despite its promise, one of its limiting factors is long training times. The current approach to speeding up RL training on complex and difficult tasks uses distributed training that scales to hundreds or even thousands of computing nodes. This still requires significant hardware resources, which makes RL training expensive and increases its environmental impact. However, recent work [1, 2] indicates that performance optimizations on existing hardware can reduce the carbon footprint (i.e., total greenhouse gas emissions) of training and inference.

RL can also benefit from similar system optimization techniques that reduce training time, improve hardware utilization and reduce carbon dioxide (CO2) emissions. One such technique is quantization, a process that converts full-precision floating point (FP32) numbers to lower precision (int8) numbers and then performs computation using the lower precision numbers. Quantization can save memory storage cost and bandwidth, enabling faster and more energy-efficient computation. Quantization has been successfully applied to supervised learning to enable edge deployments of machine learning (ML) models and to achieve faster training. However, there remains an opportunity to apply quantization to RL training.
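As a concrete illustration of the FP32-to-int8 conversion, the sketch below implements plain uniform affine quantization with NumPy: each value is mapped to `round(x / scale) + zero_point` and clipped to the int8 range. The function names and the per-tensor min/max calibration are illustrative choices, not necessarily the exact scheme used in QuaRL.

```python
import numpy as np

def quantize_int8(x: np.ndarray):
    """Uniform affine quantization of an FP32 array to int8 (per-tensor min/max)."""
    qmin, qmax = -128, 127
    # Extend the range to include 0 so that 0.0 is represented exactly.
    x_min = min(float(x.min()), 0.0)
    x_max = max(float(x.max()), 0.0)
    scale = (x_max - x_min) / (qmax - qmin) or 1.0  # avoid a zero scale
    zero_point = int(round(qmin - x_min / scale))
    q = np.clip(np.round(x / scale) + zero_point, qmin, qmax).astype(np.int8)
    return q, scale, zero_point

def dequantize_int8(q: np.ndarray, scale: float, zero_point: int) -> np.ndarray:
    """Map int8 codes back to approximate FP32 values."""
    return (q.astype(np.float32) - zero_point) * scale

rng = np.random.default_rng(0)
w = rng.normal(size=(256, 256)).astype(np.float32)
q, scale, zp = quantize_int8(w)
print(w.nbytes, q.nbytes)  # 262144 65536: the int8 copy is 4x smaller
```

For values inside the calibrated range, the rounding error of a dequantized value is at most `scale / 2`, which is the precision traded for the 4x reduction in storage and transfer size.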

To that end, we present “QuaRL: Quantization for Fast and Environmentally Sustainable Reinforcement Learning”, published in the Transactions on Machine Learning Research journal, which introduces a new paradigm called ActorQ that applies quantization to speed up RL training by 1.5-5.4x while maintaining performance. Additionally, we demonstrate that, compared to training in full precision, the carbon footprint is also significantly reduced, by a factor of 1.9-3.8x.

Applying Quantization to RL Training

In traditional RL training, a learner policy is applied to an actor, which uses the policy to explore the environment and collect data samples. The samples collected by the actor are then used by the learner to continuously refine the initial policy. Periodically, the policy trained on the learner side is used to update the actor’s policy. To apply quantization to RL training, we develop the ActorQ paradigm. ActorQ performs the same sequence described above, with one key difference: the policy update from learner to actors is quantized, and the actor explores the environment using the int8 quantized policy to collect samples.
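The ActorQ data flow can be sketched as a toy single-process loop. Everything here is hypothetical and simplified: a linear policy on a made-up contextual-bandit task stands in for the environment, a bandit-style update stands in for the real learner (D4PG or DQN in the post), and the actors are plain Python objects rather than distributed ACME processes. The point is only the structure: the learner keeps FP32 weights, the policy is quantized before every broadcast, and the actors act using only the int8 copy.

```python
import numpy as np

OBS_DIM, N_ACTIONS = 8, 4
rng = np.random.default_rng(0)

def quantize_policy(w: np.ndarray):
    """Hypothetical quantizer block: symmetric per-tensor uniform FP32 -> int8."""
    scale = float(np.max(np.abs(w))) / 127.0 or 1.0
    q = np.clip(np.round(w / scale), -127, 127).astype(np.int8)
    return q, scale

class Learner:
    """Keeps the full-precision (FP32) policy and refines it from actor samples."""
    def __init__(self):
        self.w = rng.normal(scale=0.1, size=(OBS_DIM, N_ACTIONS)).astype(np.float32)

    def update(self, samples, lr=0.05):
        # Toy bandit-style update standing in for a real RL step (e.g. D4PG or DQN).
        for obs, action, reward in samples:
            self.w[:, action] += lr * (reward - 0.5) * obs

class Actor:
    """Explores the environment using only the int8 copy of the policy."""
    def __init__(self):
        self.q_w = None

    def set_policy(self, q_w: np.ndarray):
        self.q_w = q_w

    def act(self, obs: np.ndarray, epsilon: float = 0.1) -> int:
        if rng.random() < epsilon:
            return int(rng.integers(N_ACTIONS))  # random exploration
        # A positive per-tensor scale does not change the argmax, so the greedy
        # action can be computed from the int8 weights alone.
        return int(np.argmax(obs @ self.q_w.astype(np.float32)))

def env_step(obs: np.ndarray, action: int) -> float:
    # Hypothetical toy task: reward 1 if the action picks the largest of the
    # first N_ACTIONS observation entries, else 0.
    return float(action == int(np.argmax(obs[:N_ACTIONS])))

def train(num_iters=200, steps_per_iter=32, broadcast_every=5, num_actors=2):
    learner = Learner()
    actors = [Actor() for _ in range(num_actors)]
    for it in range(num_iters):
        if it % broadcast_every == 0:
            q_w, _scale = quantize_policy(learner.w)  # quantized policy update
            for actor in actors:                      # broadcast to all actors
                actor.set_policy(q_w)
        samples = []
        for actor in actors:
            for _ in range(steps_per_iter):
                obs = rng.normal(size=OBS_DIM).astype(np.float32)
                action = actor.act(obs)
                samples.append((obs, action, env_step(obs, action)))
        learner.update(samples)
    return learner, actors

learner, actors = train(num_iters=50)
```

Only the greedy action is needed here, so the scale is dropped before broadcast; an actor that needs actual values (for example Q-values) would also receive the scale.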

Applying quantization to RL training in this fashion has two key benefits. First, it reduces the memory footprint of the policy: an int8 weight occupies one byte instead of the four bytes of an FP32 weight. For the same peak bandwidth, less data is transferred between learners and actors, which reduces the communication cost of policy updates from learners to actors. Second, the actors perform inference on the quantized policy to generate actions for a given environment state. The quantized inference process is much faster than performing inference in full precision.
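To show where the actor-side speedup comes from, the sketch below evaluates one linear layer with integer arithmetic: the weights and the observation are quantized symmetrically to int8, the products are accumulated in int32, and a single floating-point rescale recovers the output. NumPy only emulates the arithmetic here, so this code is not itself faster; in practice the gains come from optimized int8 kernels on the actor's hardware. The per-tensor symmetric scheme and the function names are illustrative assumptions.

```python
import numpy as np

def quantize_symmetric(x: np.ndarray):
    """Symmetric per-tensor int8 quantization: x ~= scale * q with q in [-127, 127]."""
    scale = float(np.max(np.abs(x))) / 127.0 or 1.0
    q = np.clip(np.round(x / scale), -127, 127).astype(np.int8)
    return q, scale

def int8_linear(obs, q_w, w_scale, bias):
    """Linear layer evaluated with integer arithmetic.

    The observation is quantized on the fly, int8 x int8 products are summed in
    an int32 accumulator to avoid overflow, and the sum is rescaled to float
    once at the end.
    """
    q_x, x_scale = quantize_symmetric(obs)
    acc = q_x.astype(np.int32) @ q_w.astype(np.int32)
    return acc.astype(np.float32) * (x_scale * w_scale) + bias

rng = np.random.default_rng(0)
w = rng.normal(size=(64, 6)).astype(np.float32)
b = np.zeros(6, dtype=np.float32)
obs = rng.normal(size=64).astype(np.float32)
q_w, w_scale = quantize_symmetric(w)        # done once, when the policy is quantized
print(obs @ w + b)                          # FP32 reference
print(int8_linear(obs, q_w, w_scale, b))    # int8 approximation
```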

An overview of traditional RL training (left) and ActorQ RL training (right).

In ActorQ, we use the ACME distributed RL framework. The quantizer block performs uniform quantization that converts the FP32 policy to int8. The actor performs inference using optimized int8 computations. Though we use uniform quantization when designing the quantizer block, we believe that other quantization techniques can replace uniform quantization and produce similar results. The samples collected by the actors are used by the learner to train a neural network policy. Periodically, the learned policy is quantized by the quantizer block and broadcast to the actors.

Quantization Improves RL Training Time and Performance

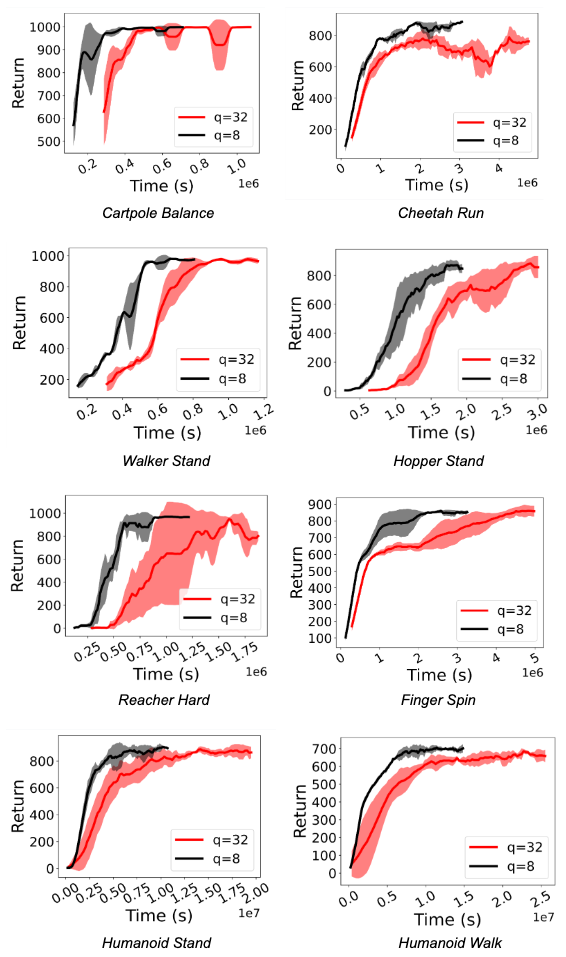

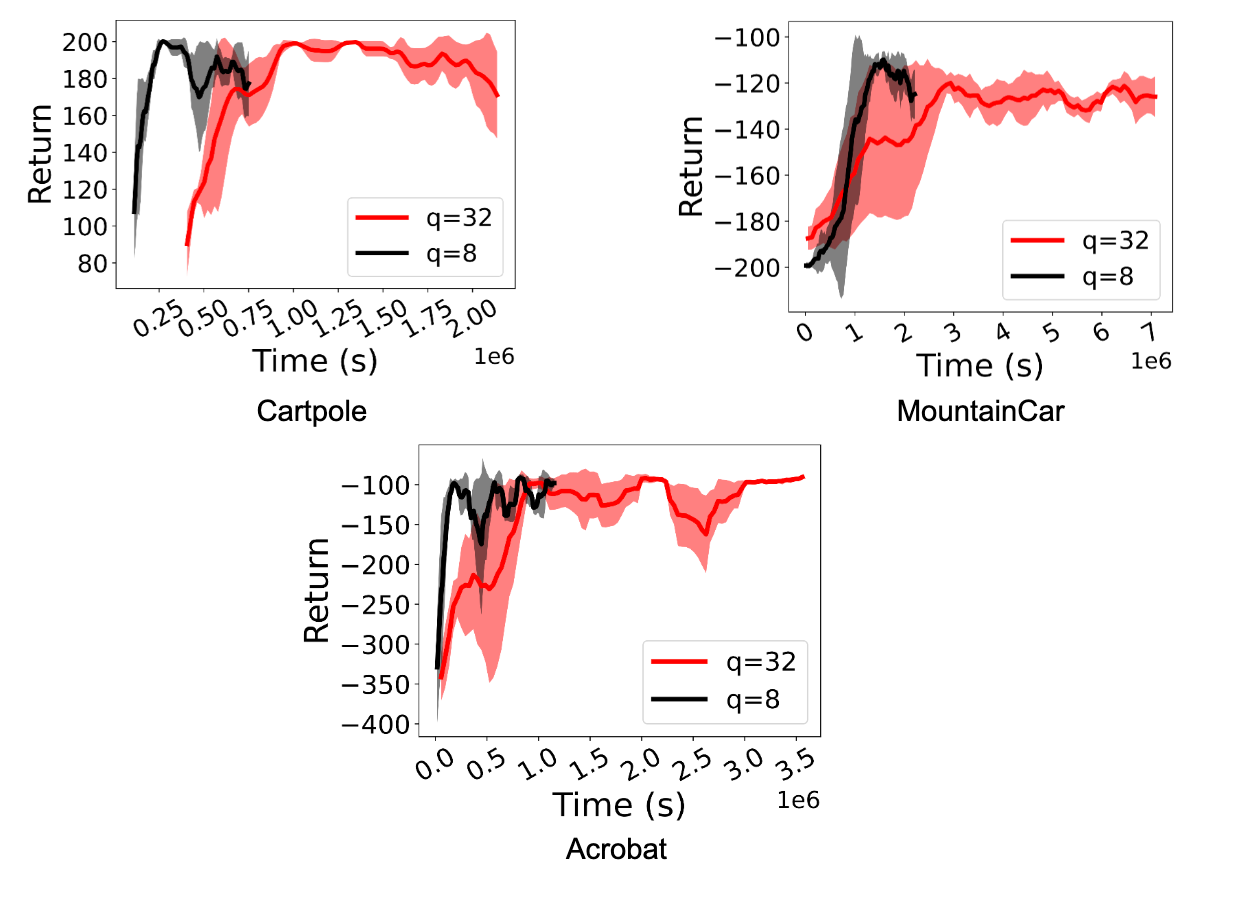

We evaluate ActorQ in a range of environments, including the DeepMind Control Suite and OpenAI Gym. We demonstrate the speed-up and improved performance of D4PG and DQN. We chose D4PG because it was the best learning algorithm in ACME for DeepMind Control Suite tasks, and DQN because it is a widely used, standard RL algorithm.

We observe a significant speedup (between 1.5x and 5.41x) in training RL policies. More importantly, performance is maintained even when actors perform int8 quantized inference. The figures above demonstrate this for the D4PG and DQN agents on DeepMind Control Suite and OpenAI Gym tasks.

Quantization Reduces Carbon Emissions

Applying quantization in RL using ActorQ improves training time without affecting performance. The direct consequence of using the hardware more efficiently is a smaller carbon footprint. We measure the carbon footprint improvement as the ratio of the carbon emissions when using the FP32 policy during training to the carbon emissions when using the int8 policy during training.
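The improvement metric is a simple ratio. The minimal sketch below assumes emissions are estimated as energy consumed times the carbon intensity of the local grid, a common simplification; experiment-impact-tracker performs its own, more detailed accounting, which this sketch does not reproduce. The function names are hypothetical, and the numbers in the example are made up for illustration, not results from the paper.

```python
def emissions_kg(energy_kwh: float, intensity_kg_per_kwh: float) -> float:
    """Estimated CO2 emissions (kg) for a run: energy x grid carbon intensity."""
    return energy_kwh * intensity_kg_per_kwh

def carbon_footprint_improvement(fp32_kg: float, int8_kg: float) -> float:
    """How many times less CO2 the int8 (ActorQ) run emitted than the FP32 run."""
    if fp32_kg <= 0 or int8_kg <= 0:
        raise ValueError("emissions must be positive")
    return fp32_kg / int8_kg

# Hypothetical runs in the same region (0.4 kg CO2 per kWh).
fp32_kg = emissions_kg(energy_kwh=10.0, intensity_kg_per_kwh=0.4)
int8_kg = emissions_kg(energy_kwh=4.0, intensity_kg_per_kwh=0.4)
print(round(carbon_footprint_improvement(fp32_kg, int8_kg), 2))  # 2.5
```

When both runs draw from the same grid, the carbon intensity cancels and the ratio reduces to the ratio of energy consumed, which is why finishing training sooner on the same hardware translates directly into lower emissions.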

To measure the carbon emissions of each RL training experiment, we use the experiment-impact-tracker proposed in prior work. We instrument the ActorQ system with carbon monitor APIs to measure the energy consumption and carbon emissions of each training experiment.

Compared to the carbon emissions when running in full precision (FP32), we observe that quantizing policies reduces carbon emissions by anywhere from 1.9x to 3.76x, depending on the task. As RL systems are scaled to run on thousands of distributed hardware cores and accelerators, we believe that the absolute carbon reduction (measured in kilograms of CO2) can be quite significant.

Conclusion and Future Directions

We introduce ActorQ, a novel paradigm that applies quantization to RL training and achieves speed-ups of 1.5-5.4x while maintaining performance. Additionally, we demonstrate that ActorQ can reduce RL training’s carbon footprint by a factor of 1.9-3.8x compared to training in full precision without quantization.

ActorQ demonstrates that quantization can be effectively applied to many aspects of RL, from obtaining high-quality and efficient quantized policies to reducing training times and carbon emissions. As RL continues to make great strides in solving real-world problems, we believe that making RL training sustainable will be critical for adoption. As we scale RL training to thousands of cores and GPUs, even a 50% improvement (as we have experimentally demonstrated) will generate significant savings in absolute dollar cost, energy, and carbon emissions. Our work is the first step toward applying quantization to RL training to achieve efficient and environmentally sustainable training.

While our design of the quantizer in ActorQ relied on simple uniform quantization, we believe that other forms of quantization, compression and sparsity can be applied (e.g., distillation, sparsification, etc.). We hope that future work will consider applying more aggressive quantization and compression methods, which may yield additional benefits to the performance and accuracy tradeoff obtained by the trained RL policies.

Acknowledgments

We would like to thank our co-authors Max Lam, Sharad Chitlangia, Zishen Wan, and Vijay Janapa Reddi (Harvard University), and Gabriel Barth-Maron (DeepMind), for their contributions to this work. We also thank the Google Cloud team for providing research credits to seed this work.
