To test its new approach, Hugging Face estimated the overall emissions for its own large language model, BLOOM, which was launched earlier this year. The process involved adding up many different numbers: the amount of energy used to train the model on a supercomputer, the energy needed to manufacture the supercomputer’s hardware and maintain its computing infrastructure, and the energy used to run BLOOM once it had been deployed. The researchers calculated that final part using a software tool called CodeCarbon, which tracked the carbon emissions BLOOM was producing in real time over a period of 18 days.

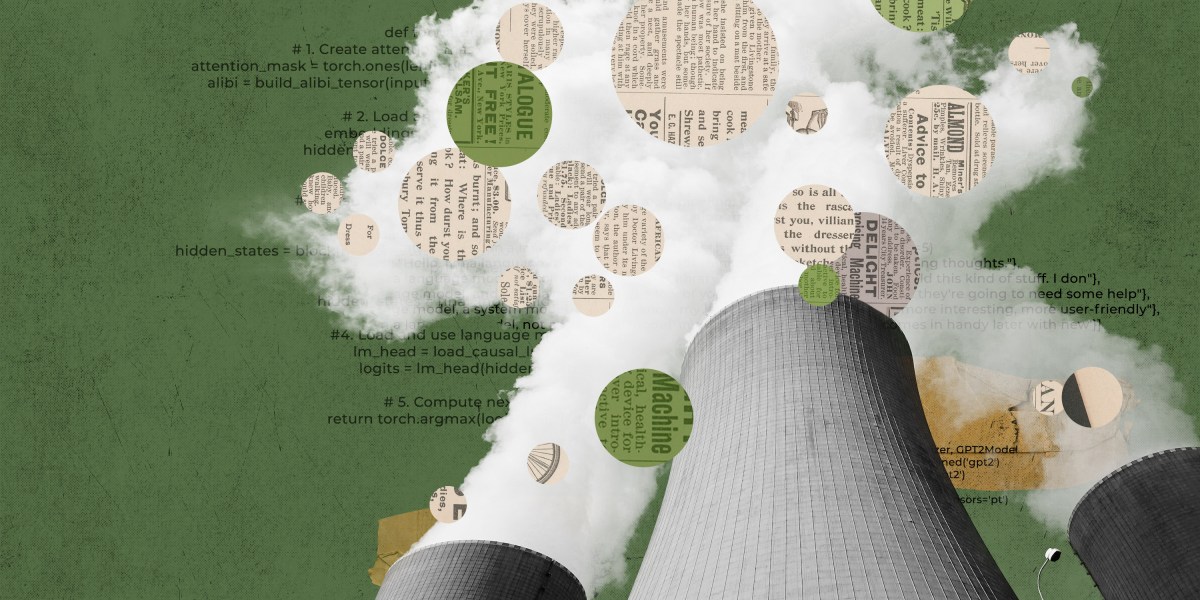

Hugging Face estimated that BLOOM’s training led to 25 metric tons of carbon emissions. But, the researchers found, that figure doubled when they took into account the emissions produced by the manufacturing of the computer equipment used for training, the broader computing infrastructure, and the energy required to actually run BLOOM once it was trained.

While that may seem like a lot for one model (50 metric tons of carbon emissions is the equivalent of around 60 flights between London and New York), it is significantly less than the emissions associated with other LLMs of the same size. This is because BLOOM was trained on a French supercomputer that is mostly powered by nuclear energy, which doesn’t produce carbon emissions. Models trained in China, Australia, or some parts of the US, which have energy grids that rely more on fossil fuels, are likely to be more polluting.
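The arithmetic behind these figures can be sketched in a few lines of Python. This is only an illustration built from the rounded numbers quoted in this article: the variable names are our own, "doubled" is read as exactly 2x, and the per-flight value is simply what the article's comparison implies, not an official emissions factor.

```python
# Rounded figures quoted in the article (metric tons of carbon emissions).
TRAINING_TONNES = 25.0      # emissions attributed to training BLOOM
LIFECYCLE_MULTIPLIER = 2.0  # assumption: "doubled" read as exactly 2x
FLIGHTS_EQUIVALENT = 60     # London-New York flights quoted as equivalent

# Total once manufacturing, infrastructure, and deployment are included.
lifecycle_tonnes = TRAINING_TONNES * LIFECYCLE_MULTIPLIER

# Share of the total that does not come from training itself.
non_training_tonnes = lifecycle_tonnes - TRAINING_TONNES

# Per-flight figure implied by the article's comparison (not an official factor).
implied_tonnes_per_flight = lifecycle_tonnes / FLIGHTS_EQUIVALENT

print(f"Life cycle total: {lifecycle_tonnes:.0f} t")
print(f"Non-training share: {non_training_tonnes:.0f} t")
print(f"Implied emissions per flight: {implied_tonnes_per_flight:.2f} t")
```

Under these assumptions, roughly half of the estimated footprint comes from stages other than training, which is the point of accounting for the wider life cycle.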

After BLOOM was launched, Hugging Face estimated that using the model emitted around 19 kilograms of carbon dioxide per day, which is similar to the emissions produced by driving around 54 miles in an average new car.
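As a rough check on that comparison, the short Python sketch below works out the per-mile figure the article's numbers imply and what the daily rate would add up to over the 18-day tracking period. The names are hypothetical, and the calculation assumes the 19 kg/day rate stayed constant, which the article does not state.

```python
# Rounded figures quoted in the article.
DAILY_KG = 19.0          # kg of carbon dioxide per day of running BLOOM
EQUIVALENT_MILES = 54.0  # miles driven in an average new car, per the article
TRACKING_DAYS = 18       # length of the CodeCarbon tracking period

# Per-mile emissions implied by the article's comparison.
implied_kg_per_mile = DAILY_KG / EQUIVALENT_MILES

# Assumption: the daily rate is constant across the tracking period.
tracking_total_kg = DAILY_KG * TRACKING_DAYS

print(f"Implied car emissions: {implied_kg_per_mile:.2f} kg per mile")
print(f"Total over {TRACKING_DAYS} days: {tracking_total_kg:.0f} kg")
```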

By way of comparison, OpenAI’s GPT-3 and Meta’s OPT were estimated to emit more than 500 and 75 metric tons of carbon dioxide, respectively, during training. GPT-3’s vast emissions can be partly explained by the fact that it was trained on older, less efficient hardware. But it is hard to say what the figures are for certain; there is no standardized way to measure carbon emissions, and these figures are based on external estimates or, in Meta’s case, limited data the company released.

“Our goal was to go above and beyond just the carbon emissions of the electricity consumed during training and to account for a larger part of the life cycle in order to help the AI community get a better idea of their impact on the environment and how we could begin to reduce it,” says Sasha Luccioni, a researcher at Hugging Face and the paper’s lead author.

Hugging Face’s paper sets a new standard for organizations that develop AI models, says Emma Strubell, an assistant professor in the school of computer science at Carnegie Mellon University, who wrote a seminal paper on AI’s impact on the climate in 2019. She was not involved in this new research.

The paper “represents the most thorough, honest, and knowledgeable analysis of the carbon footprint of a large ML model to date as far as I am aware, going into much more detail … than any other paper [or] report that I know of,” says Strubell.